Gorgias AI Agent Pricing, Explained

TL;DR:

- AI Agent is priced per resolved interaction, not per seat or per message. You only pay when the AI fully resolves a conversation on its own.

- Most plans are $0.90 per resolved interaction. Starter plans begin at $1. Plans include 90 to 2,500+ automated interactions per month.

- If you go over your plan, overage fees apply per additional interaction. Rates vary by tier and are lower on annual plans.

- Your automation rate emerges from usage over time. Start by estimating your ticket volume and pick an interaction allotment that fits.

- AI Agent runs on email, chat, and SMS, and includes tone of voice customization, Actions, multi-language support, vision, and performance reporting.

If you're wondering what it costs to add AI Agent to your Helpdesk, you're in the right place. This article walks through how pricing works, what counts as a billable interaction, and how to think about the investment before talking to anyone on our team.

The good news: there are no seat fees, no per-message charges, and no token-based billing. You pay for conversations your AI actually resolves. If you've looked into other AI tools for customer support and found the pricing models confusing or hard to predict, Gorgias AI Agent works differently.

What is a billable interaction?

A billable interaction is counted when the AI resolves a customer conversation entirely on its own. The customer asks something, the AI handles it, the conversation closes. That's one interaction.

If the AI can't fully resolve a conversation and hands it to a human agent, that ticket shifts over to your regular Helpdesk plan. It becomes a standard resolved ticket. You're not charged for both.

A few things that don't count as billable interactions:

- Emails that come in but no one replies to

- Spam or filtered messages

- Conversations resolved by a human agent

This matters most for brands coming from seat-based tools. With Gorgias, your whole team can work in the platform. Agent seats are unlimited. Pricing scales with what your AI is actually doing, not with how many people have access.

Understand the difference between seat-based and usage-based pricing.

How AI Agent plans work

AI Agent is an add-on to your Gorgias Helpdesk plan. The two are priced separately but work together. Your Helpdesk plan covers all the conversations your human agents resolve. Your AI Agent plan covers the interactions the AI resolves on its own.

When you choose a plan, you select how many automated interactions you want included per month. Depending on your plan, that ranges from 90 to 2,500+ interactions, with custom interaction numbers available for enterprise. You can see the full breakdown on the Gorgias pricing page.

Each resolved conversation costs $0.90 on most plans. Starter plans begin at $1 per resolved conversation. You only pay for fully automated interactions, meaning conversations the AI handles from start to finish without a human stepping in.

Choosing the right plan

The main input is your average monthly ticket volume. From there, you estimate how many of those conversations AI could realistically handle on its own.

Order status updates, return requests, and shipping questions tend to be the highest-volume ticket types AI resolves well. AI Agent actions shows the full range of what it can handle, which makes it easier to estimate your starting number.

Your actual automation rate, meaning the share of total tickets the AI ends up resolving, emerges from usage over time. Most brands start with their most repetitive ticket types and expand from there as they see results.
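As a rough illustration of the estimate described above, the sketch below turns a monthly ticket volume and an assumed automation rate into an interaction count and a monthly cost. The function name, the 2,000-ticket volume, and the 30% automation rate are hypothetical inputs chosen for the example. It also assumes the cost is simply the interaction count multiplied by the $0.90 per-interaction rate quoted above, so check the pricing page for actual plan prices.

```python
def estimate_monthly_ai_cost(monthly_tickets: int, automation_pct: int,
                             price_cents: int = 90) -> tuple[int, float]:
    """Estimate AI-resolved interactions per month and their cost in dollars.

    automation_pct is an assumed share (0-100) of tickets the AI resolves alone.
    price_cents defaults to the $0.90 rate quoted for most plans.
    """
    if not 0 <= automation_pct <= 100:
        raise ValueError("automation_pct must be between 0 and 100")
    # Ceiling division in integers, so a partial interaction rounds up.
    interactions = -(-monthly_tickets * automation_pct // 100)
    cost_cents = interactions * price_cents
    return interactions, cost_cents / 100


# Hypothetical store: 2,000 tickets a month, 30% handled fully by AI.
interactions, cost = estimate_monthly_ai_cost(2000, 30)
print(f"{interactions} interactions, about ${cost:.2f} per month")
```

The interaction count is the number to compare against the plan allotments. The automation rate is a starting guess that you would revise once real usage data comes in.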

Related: Which Gorgias plan should you choose?

What happens if you go over your plan

You're charged an overage fee for each additional automated interaction if you exceed your plan's baseline in a given month. The exact rate depends on your plan tier and whether you're on a monthly or annual subscription.

Generally, the higher your plan tier, the lower your overage rate. Annual plans also carry lower overage rates than monthly plans. So if you're regularly going over, upgrading to a higher tier or switching to annual often works out cheaper than paying overage fees month after month.

If you're on a Support + Shopping Assistant plan, the overage rate is $1.50 per interaction across all paid tiers. If you're on a Support-only plan, rates range from $1.00 to $2.00 per interaction on monthly plans, and $0.83 to $1.67 on annual plans, depending on your tier.
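To make the overage math concrete, here is a minimal sketch that charges only for interactions beyond the plan allotment. The function name and the usage figures are hypothetical. The $1.50 rate is the Support + Shopping Assistant overage rate quoted above, and other tiers would pass their own rate.

```python
def overage_fee(used: int, included: int, overage_rate_cents: int) -> tuple[int, float]:
    """Return (extra interactions, overage fee in dollars) for one month."""
    # Only interactions above the plan's included allotment are billed as overage.
    extra = max(0, used - included)
    return extra, extra * overage_rate_cents / 100


# Hypothetical month: 700 automated interactions on a 600-interaction plan,
# billed at the $1.50 Support + Shopping Assistant overage rate.
extra, fee = overage_fee(used=700, included=600, overage_rate_cents=150)
print(f"{extra} extra interactions, ${fee:.2f} in overage fees")
```

Running the same function across a few months of usage is one way to see whether a higher tier or an annual plan would cost less than recurring overage fees.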

For seasonal businesses, forecasting your customer service volume before peak periods is the best way to choose the right plan size and avoid unexpected fees.

How to think about the cost

At $0.90 per resolved interaction on most plans, each AI resolution costs less than a human agent handling the same ticket. Once you know what a human-resolved ticket costs your business, the comparison becomes straightforward.
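The comparison can be sketched in a few lines. The $4.00 human cost per ticket below is a made-up placeholder, not a Gorgias figure, so substitute your own number. The function name and the 600-interaction volume are also hypothetical.

```python
def monthly_savings(ai_resolved: int, human_cost_cents: int,
                    ai_cost_cents: int = 90) -> float:
    """Dollars saved per month when AI resolves tickets a human would otherwise handle."""
    # Savings per ticket is the gap between the human cost and the AI rate.
    return ai_resolved * (human_cost_cents - ai_cost_cents) / 100


# Hypothetical: 600 AI-resolved tickets, $4.00 per human-resolved ticket.
print(f"${monthly_savings(600, human_cost_cents=400):.2f} saved per month")
```

A negative result would mean the AI rate is higher than your human cost per ticket, which is why the human-resolved ticket cost is the number to pin down first.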

For brands building an internal case for the investment, how to pitch AI Agent to your boss covers the ROI framing in detail.

To see what results look like in practice, how 10 brands transformed customer support into revenue has real ecommerce examples.

What's included with AI Agent

AI Agent comes with everything you need to set it up, customize it, and improve it over time:

- Knowledge training — AI Agent learns from your Shopify data, store website, Help Center articles, URLs, documents, and custom guidance. The more content it has, the more accurately it responds.

- Tone of voice — set instructions for how AI Agent sounds, whether that's professional, friendly, or something else, and it stays consistent across every conversation.

- Actions — connect AI Agent to your other tools so it can complete tasks like cancelling an order, processing a return, or modifying a subscription without a human stepping in. See what AI Agent can do.

- Multi-language support — AI Agent detects the language a customer writes in and replies in the same language automatically.

- Vision — AI Agent can read and understand images, so it can handle tickets where customers share photos of damaged items or order issues.

- Performance reporting — track automation rate, CSAT, first-response time, and ticket topics directly in the dashboard.

- Testing — preview how AI Agent responds to real customer questions before going live or after making changes.

- Handover to humans — AI Agent automatically passes conversations to your team when it lacks confidence, detects frustration, or encounters a topic you've marked for human handling.

Learn more: Gorgias AI Agent guardrails: What they are and how to configure them

Curious what AI Agent would automate for your store?

The best way to get a sense of what AI Agent will cost is to look at your own ticket volume and the types of questions your customers ask most. From there, the right plan becomes much clearer.

If you want to talk through the numbers with someone from our team, book a demo and we'll walk through it with you.

If you'd rather keep exploring first, here are a few good next reads:

- AI Agent keeps getting smarter — the latest performance data across the Gorgias customer base

- 10 must-know AI Agent use cases — the ticket types brands are automating today

- How 10 brands transformed customer support into revenue — real ecommerce results

- How to customize AI Agent with 7 brand voice examples — what the experience looks like for customers


TL;DR:

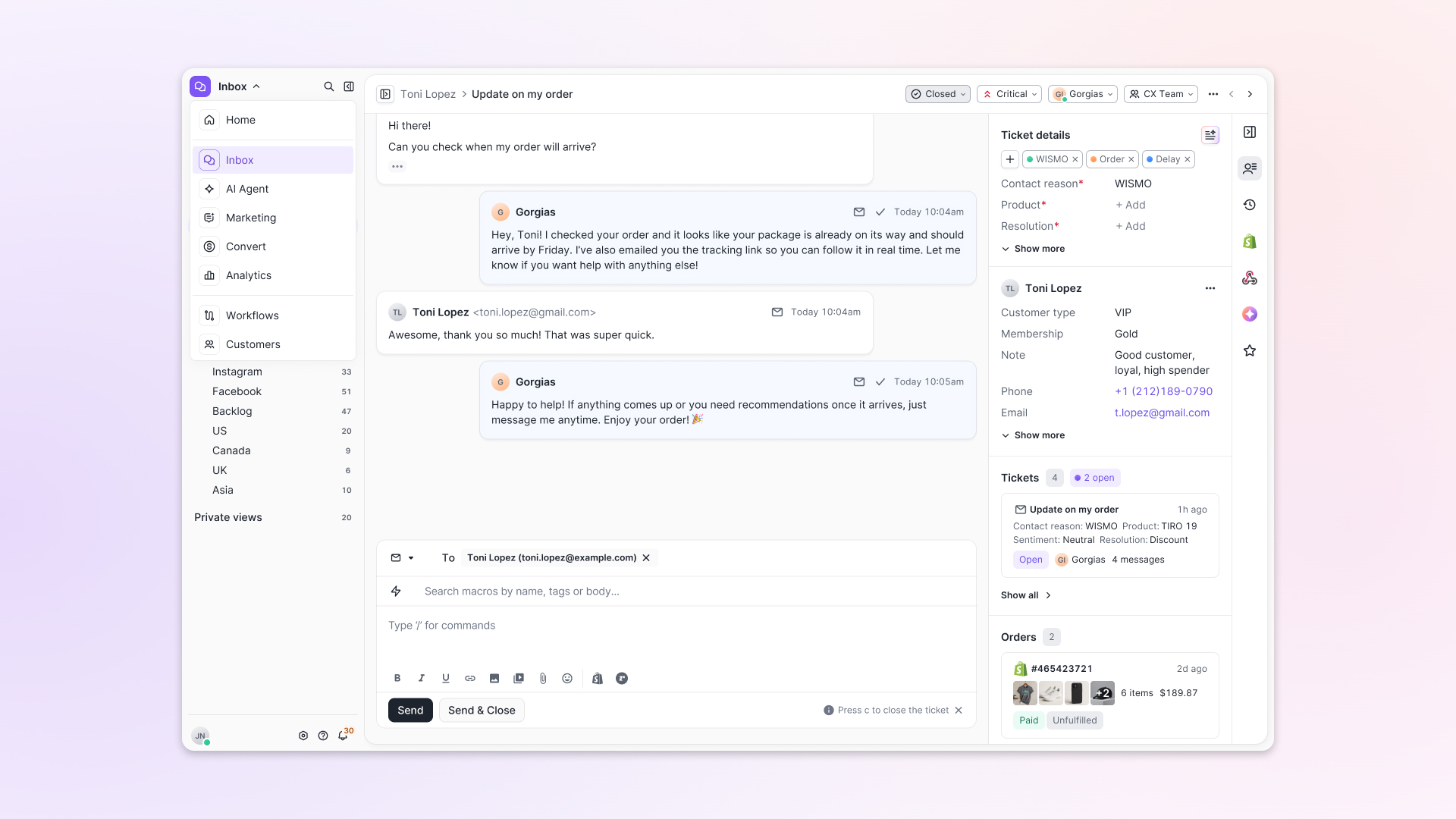

- Built directly from agent feedback, Helpdesk 2.0 fixes real workflow pain points. The redesign focuses on reducing friction and helping agents handle more context-heavy tickets.

- A chat-style interface replaces the old email layout. Conversations are easier to follow and resolve in one view.

- Customer context is shown beside the conversation in a right-side panel. Agents can view history, orders, and details without leaving the ticket.

- AI handoffs come with clear summaries. Agents instantly see what happened, what was tried, and what to do next.

- Navigation is simpler and faster across teams. Clean menus, structured queues, and multi-store access keep agents moving efficiently.

Helpdesk 2.0 starts with the people who use it most: the agents.

We spent time understanding customer support from the agent's seat. What do they reach for constantly? What slows them down? What does a better workday look like?

Everything we found is in this brand-new update.

Why we redesigned Helpdesk

Conversational commerce is the new standard.

In customer support, this means customers expect context to remain intact wherever they reach out, whether a conversation starts on social, moves to email, or ends on a call.

This new approach to support has also changed the agent's role. Recurring tickets, like order status checks, shipping updates, and returns, are now handled by AI. What lands in the agent inbox are edge cases that require human judgment and troubleshooting, or tickets that require the full picture.

However, the original Helpdesk was built for a different era of support.

Context was separated across views rather than built into the conversation itself. It's something one in five Gorgias customers flagged, through support tickets, NPS surveys, and conversations with our team. So, we got to work.

Helpdesk 2.0 is the result.

What's new in Helpdesk 2.0

Here's a look at everything that changed.

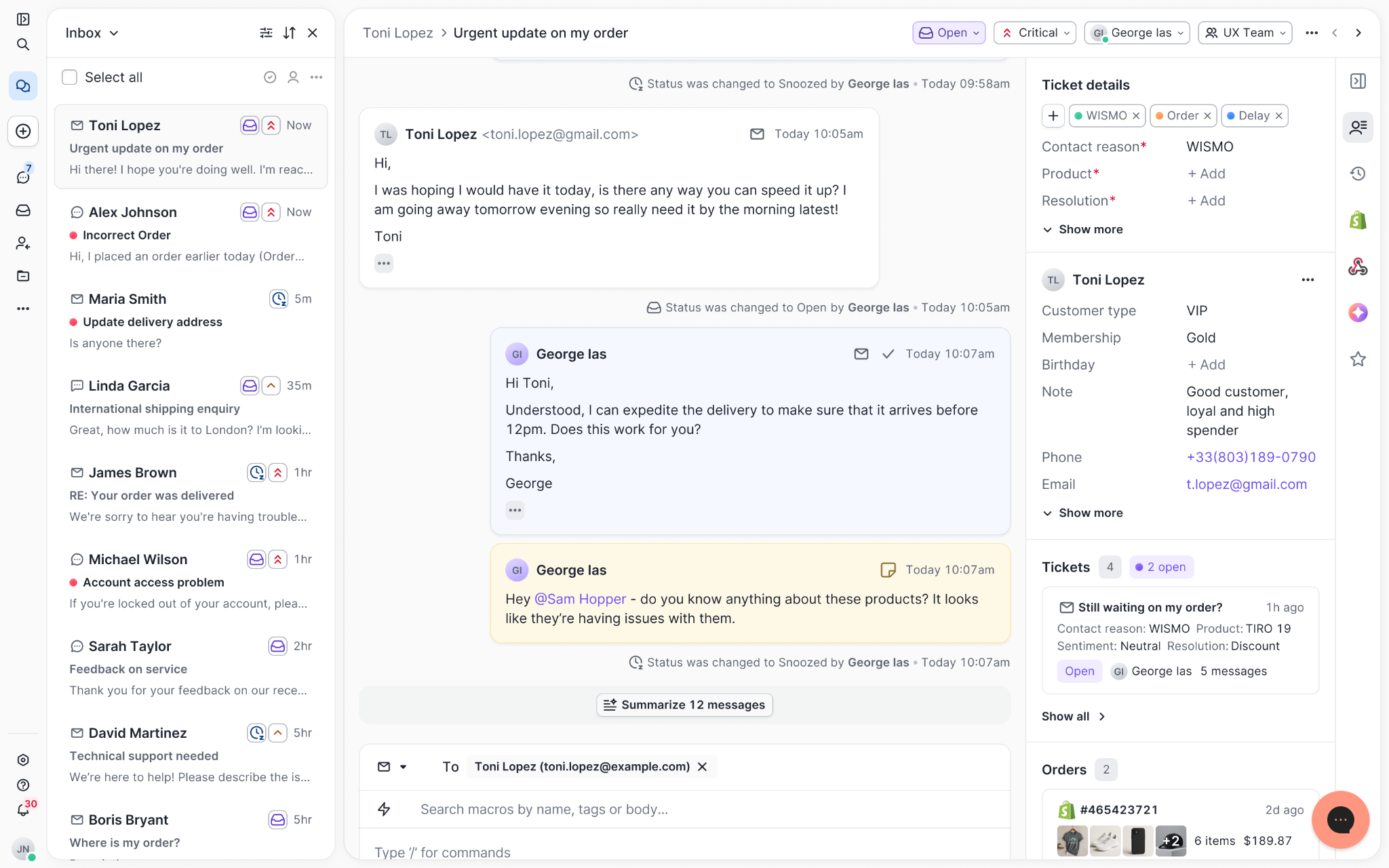

Read conversations the way they're meant to be read

Conversations have a natural rhythm, one that’s already found in every messaging tool we use. We brought that same layout into the helpdesk.

Say goodbye to the 2000s email interface and hello to chat bubbles. This updated design changes how quickly you can orient yourself and resolve the ticket in one go.

Chats with customers now look like real conversations, using the speech bubble style you’re familiar with on popular messaging apps.

Check customer history without losing your place

Checking a customer's history used to mean leaving the conversation, an extra step that interrupted what should have been a smooth workflow.

Now, past conversations open in a sidebar next to the active conversation. You can view a customer’s full history, search through their timeline, and open prior tickets without going to a new page.

Check past conversations, orders, and customer details in the brand-new Customer Timeline.

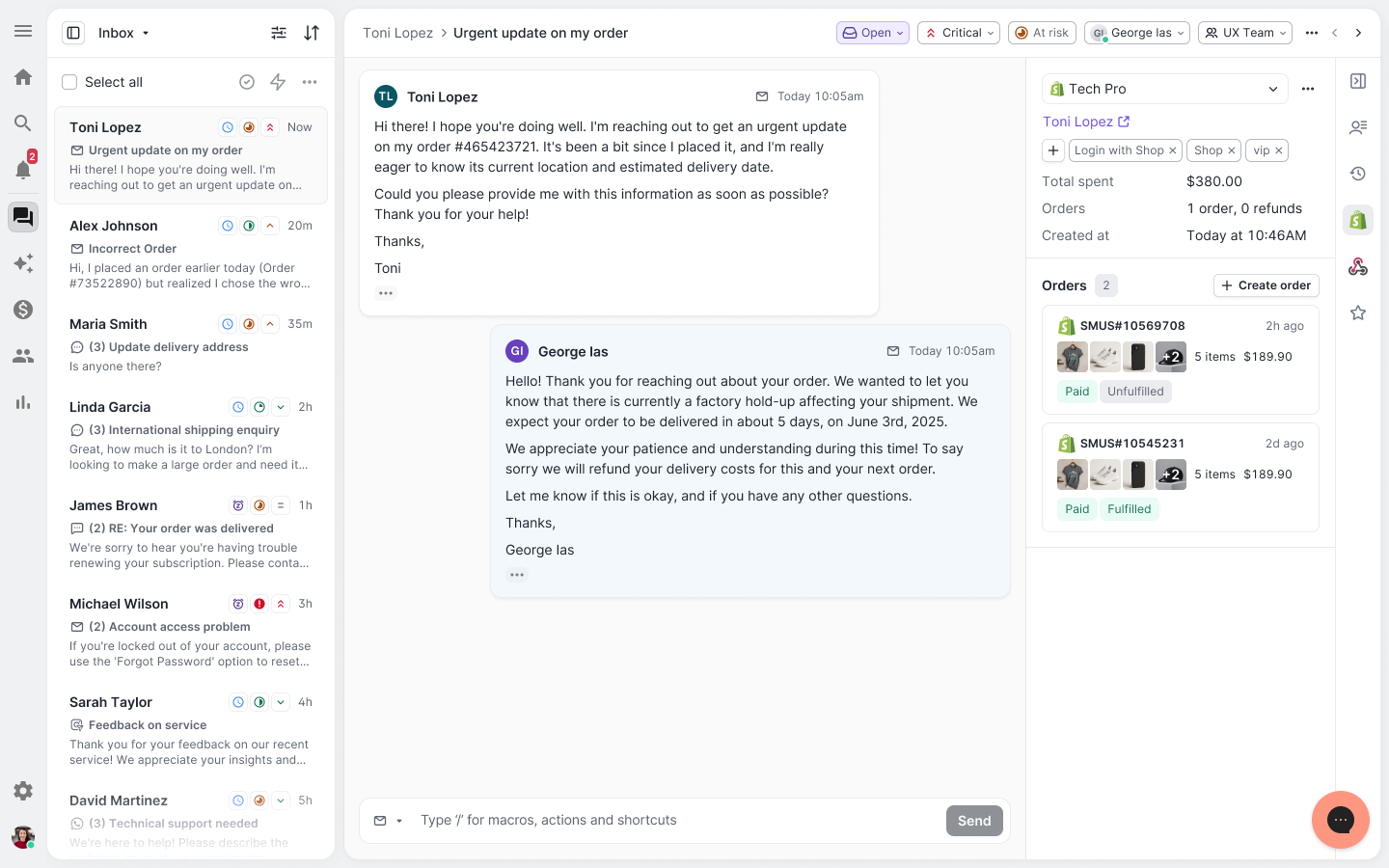

See order details the moment you open a ticket

Order information is easier to reference than ever. Open a ticket, and you instantly see the customer's recent orders, marked with product images and invoice details at a glance. Need to dig deeper? Click on an order, and the expanded information appears in the same panel.

For teams using custom integrations, apps are fixed in a quick-access integration menu on the right.

See order details, product images, and totals at a glance on the right panel, without leaving the conversation.

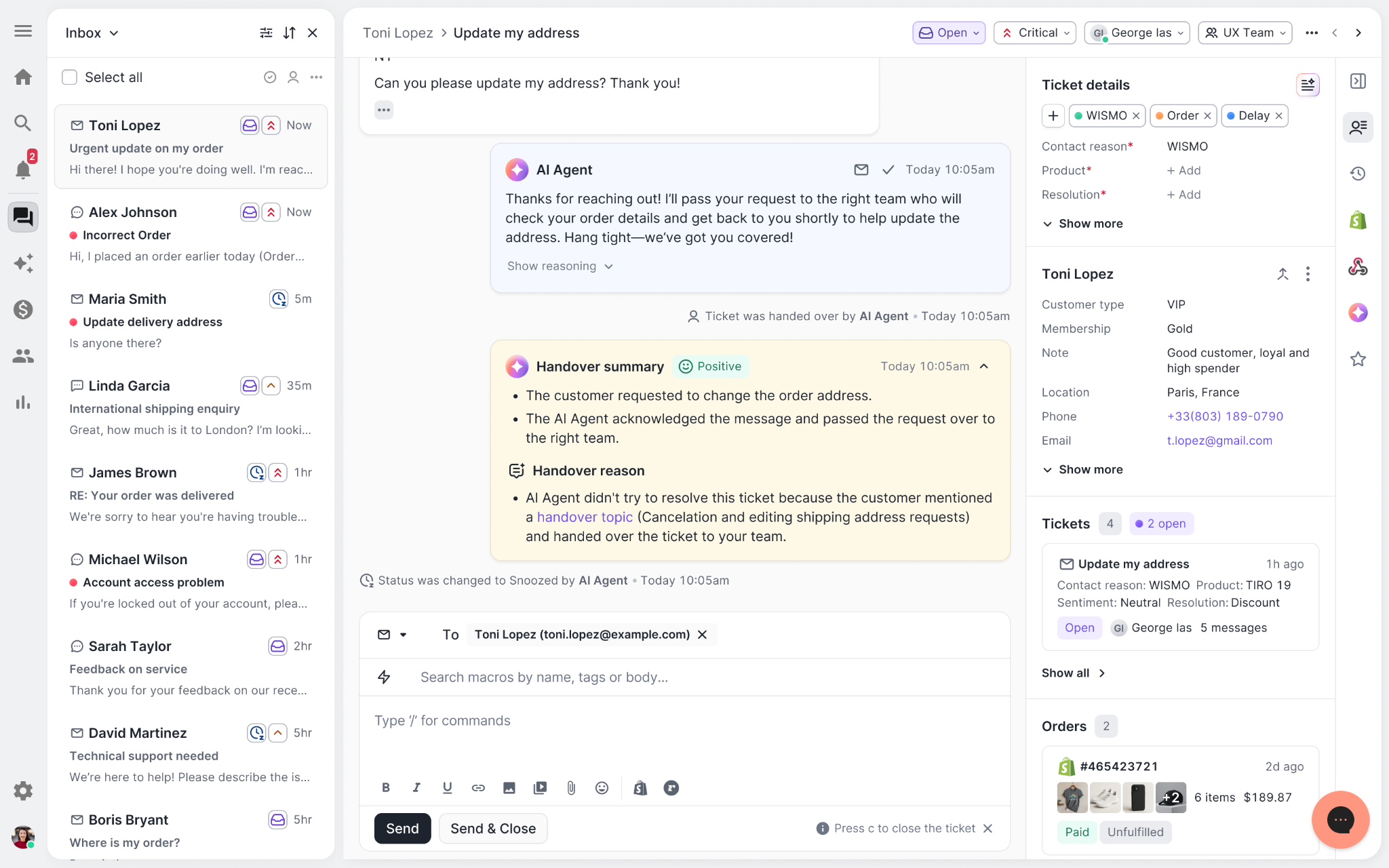

Pick up where AI left off

You shouldn't have to dig through a thread to figure out what AI already tried. Now you don't have to.

When AI Agent escalates a conversation, it includes a concise handover summary that mentions the issue, what actions were taken, and why it was passed to your team.

Escalated tickets include a brief AI-generated handover summary, marked in yellow, for quick reference.

Move faster across every store and team

We restructured and simplified the navigation. The left sidebar organizes everything into clear categories: Inbox, AI Agent, Marketing, and Analytics, so anyone on your team knows exactly where to go.

Need to quickly update your knowledge base or adjust a workflow? Both now live right in the sidebar. For teams managing multiple stores, switching between them is just as straightforward: it's accessible from the sidebar, so agents can move between inboxes without breaking their flow.

Agents can switch between stores and their corresponding inboxes directly from the left menu.

A workspace that works the way agents do

Support comes down to the person on the other end of the conversation. We built Helpdesk 2.0 to make sure they have everything they need to show up for that moment.

The best way to see the difference is to work in it. Start a free trial today.


Conversations Are Becoming a Revenue Channel: The Data Proves It

TL;DR:

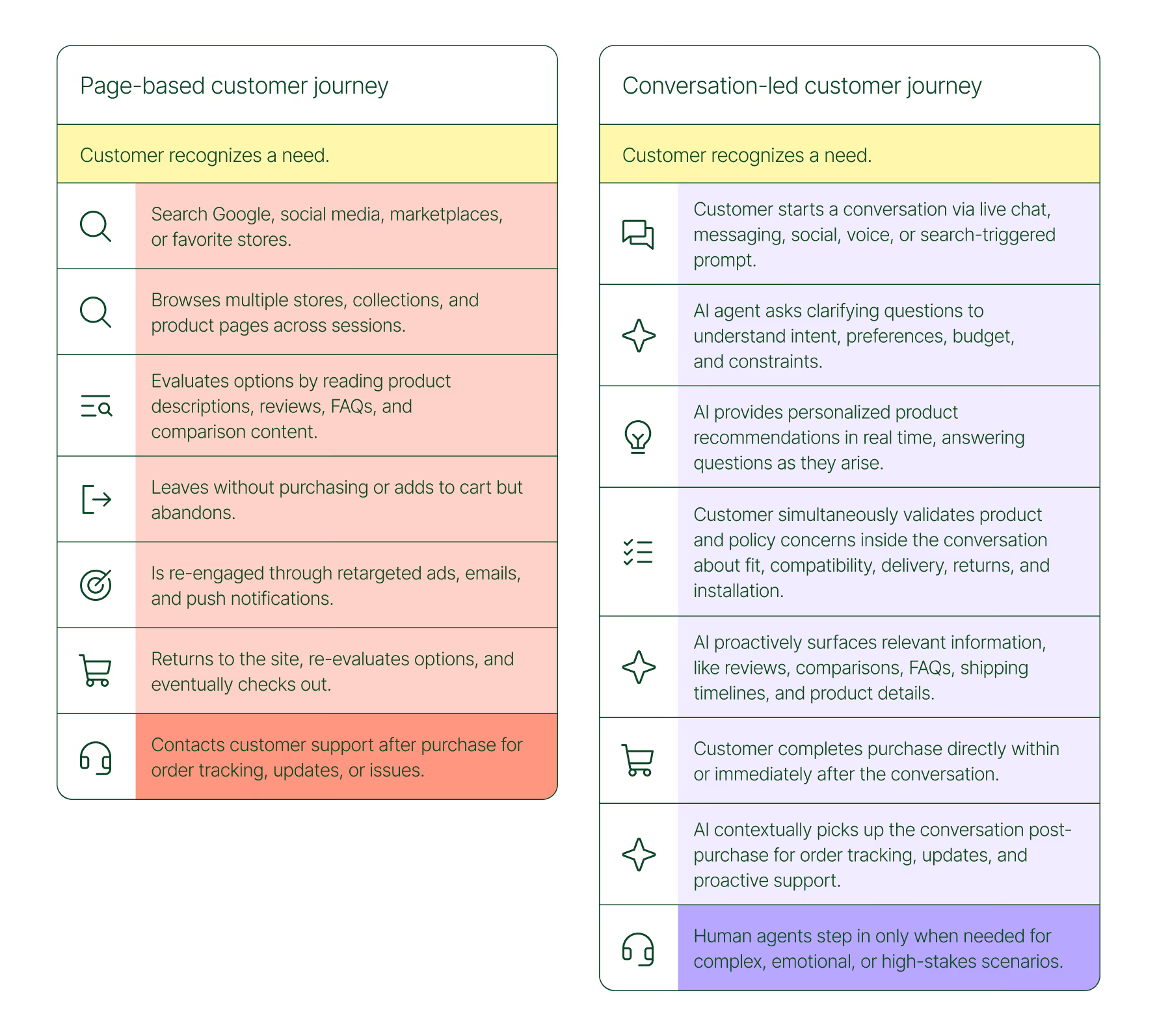

- Customer journeys are collapsing to a single conversation. The traditional browse-and-buy journey is giving way to AI-guided shopping that moves from discovery to purchase in a single exchange.

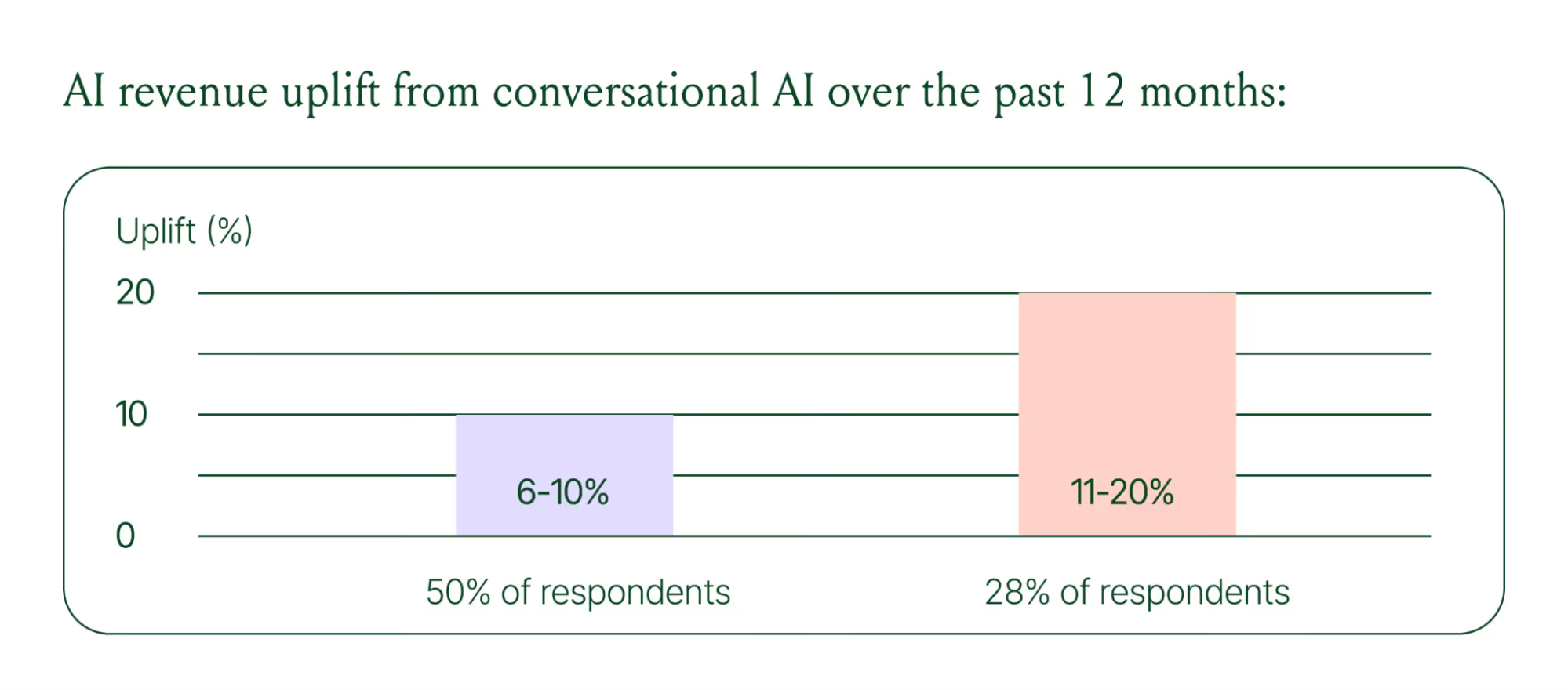

- 79% of brands say AI-driven conversational commerce has increased their sales and purchase rates.

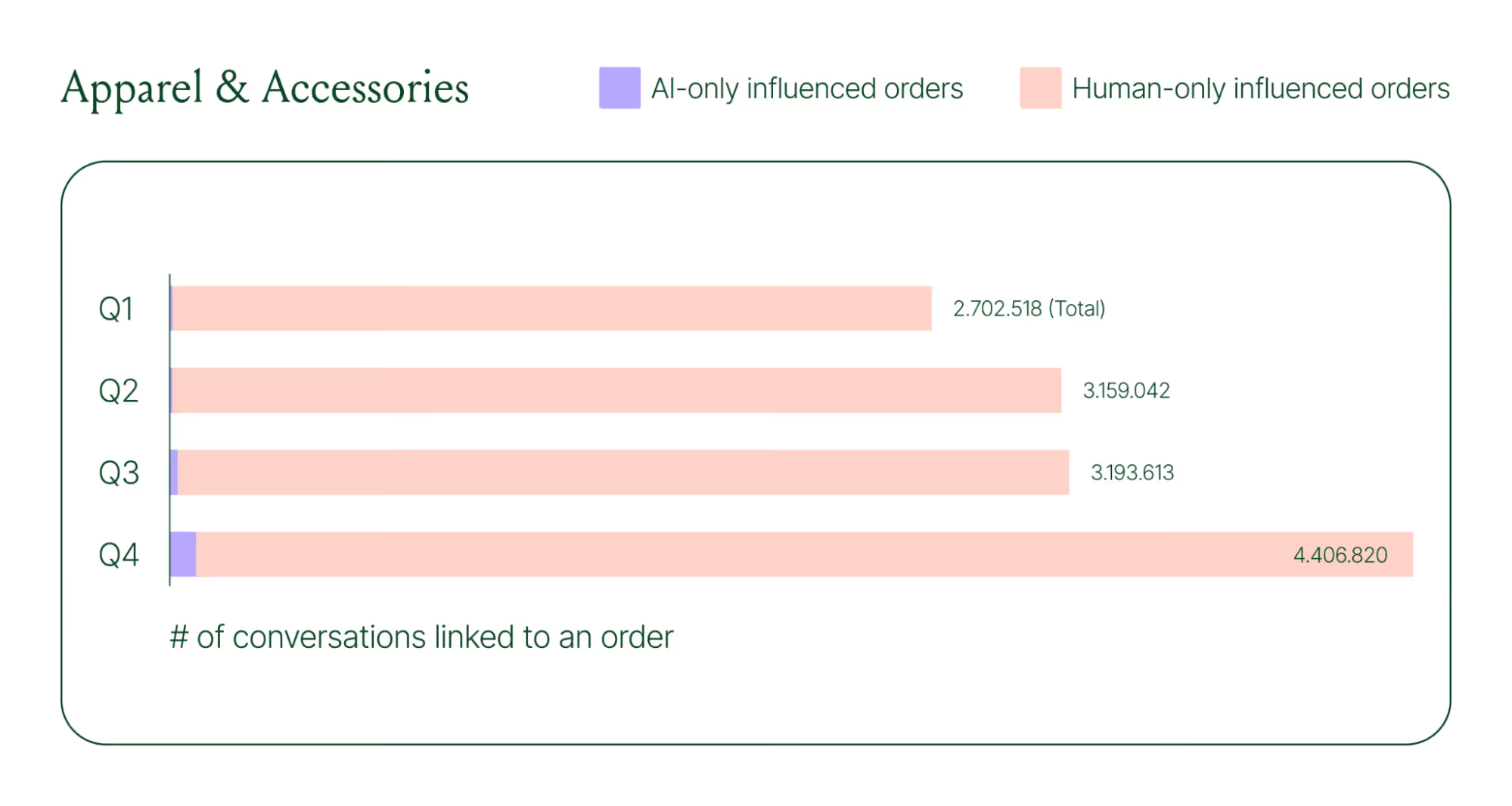

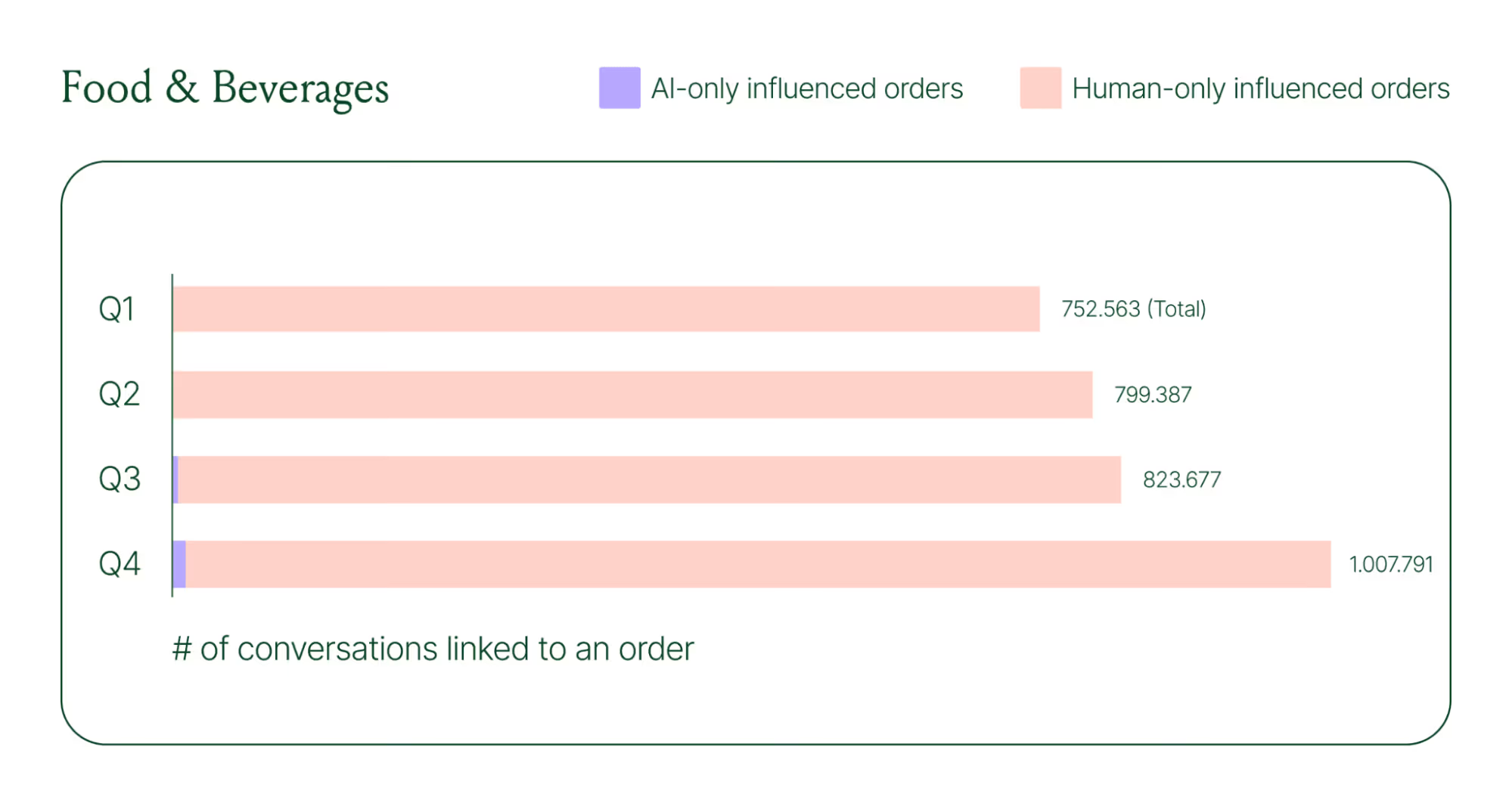

- AI-only influenced orders grew 63% in a single year, from 2.7 million in Q1 to 4.4 million in Q4.

- Brands are treating conversation as a revenue channel, not just a support function. They’re generating higher AOV, shorter buying cycles, and stronger retention.

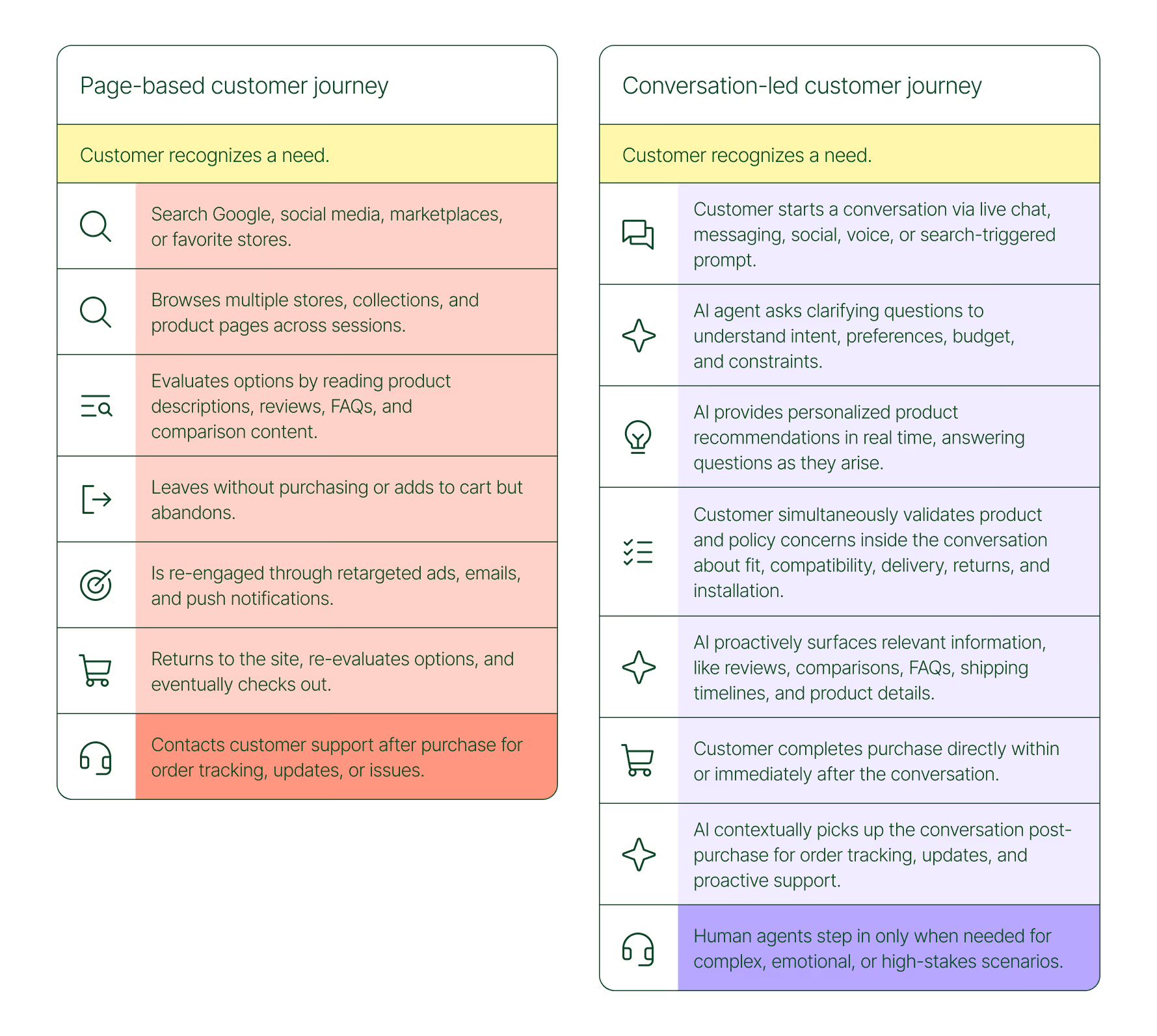

The page-based shopping experience dominated for decades. Customers would search, browse, compare, abandon, get retargeted, return, and eventually buy (sometimes).

That journey is no longer the only option.

Shoppers are turning to chat, messaging, and AI-powered tools to find what they need. Instead of clicking through product pages or reading static FAQs, they ask questions, have back-and-forth conversations, and get answers that move them closer to a purchase in real time. The path to checkout has changed, and the brands that recognize this are pulling ahead.

Read our 2026 State of Conversational Commerce Report to learn more about conversational commerce trends from 400 ecommerce decision-makers and 16,000+ ecommerce brands using Gorgias.


The shopping journey has collapsed into a single thread

The traditional shopping journey was a solo experience. A shopper had a need, searched for options, browsed across sessions, and eventually made a decision — often days later, after being retargeted multiple times. Support only entered the picture after the purchase.

The conversation-led journey collapses that timeline:

- A shopper recognizes a need and starts a conversation via chat, messaging, or a search-triggered prompt

- An AI agent asks clarifying questions about preferences, budget, and constraints

- The AI provides personalized product recommendations in real time

- The shopper validates concerns about fit, compatibility, delivery, and returns, all inside the conversation

- The shopper completes the purchase directly within or immediately after that exchange

- The AI picks up the conversation post-purchase for order tracking and proactive support

- A human agent steps in only when the situation calls for it

What used to take days now takes minutes. Discovery, evaluation, and purchase happen in a single thread.

Conversation is a revenue strategy, not a support upgrade

79% of brands agree that AI-driven conversational commerce has increased sales and purchase rates in their business. When brands were asked to rank the highest-return areas:

- 38% cited improved customer support efficiency

- 23% pointed to higher customer retention and loyalty

- 20% saw improved purchase rates

Those numbers reflect something important: the value of conversation compounds. Faster support reduces friction. Better retention raises lifetime value. More confident shoppers buy more often and spend more per order.

The brands seeing the biggest returns aren't just using AI to deflect tickets. They're using it to create one-to-one shopping experiences at scale.

What the data shows about AI-influenced orders

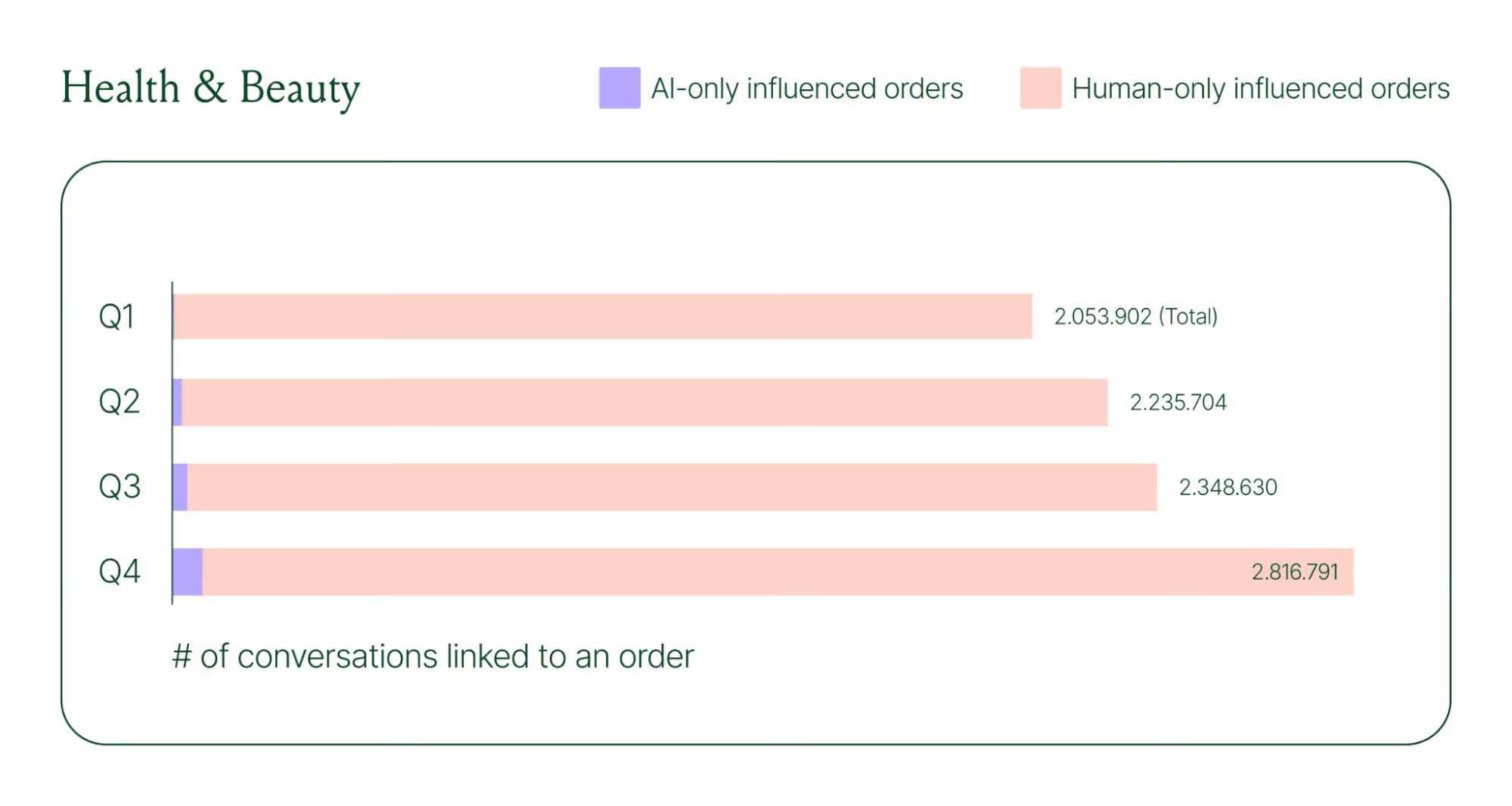

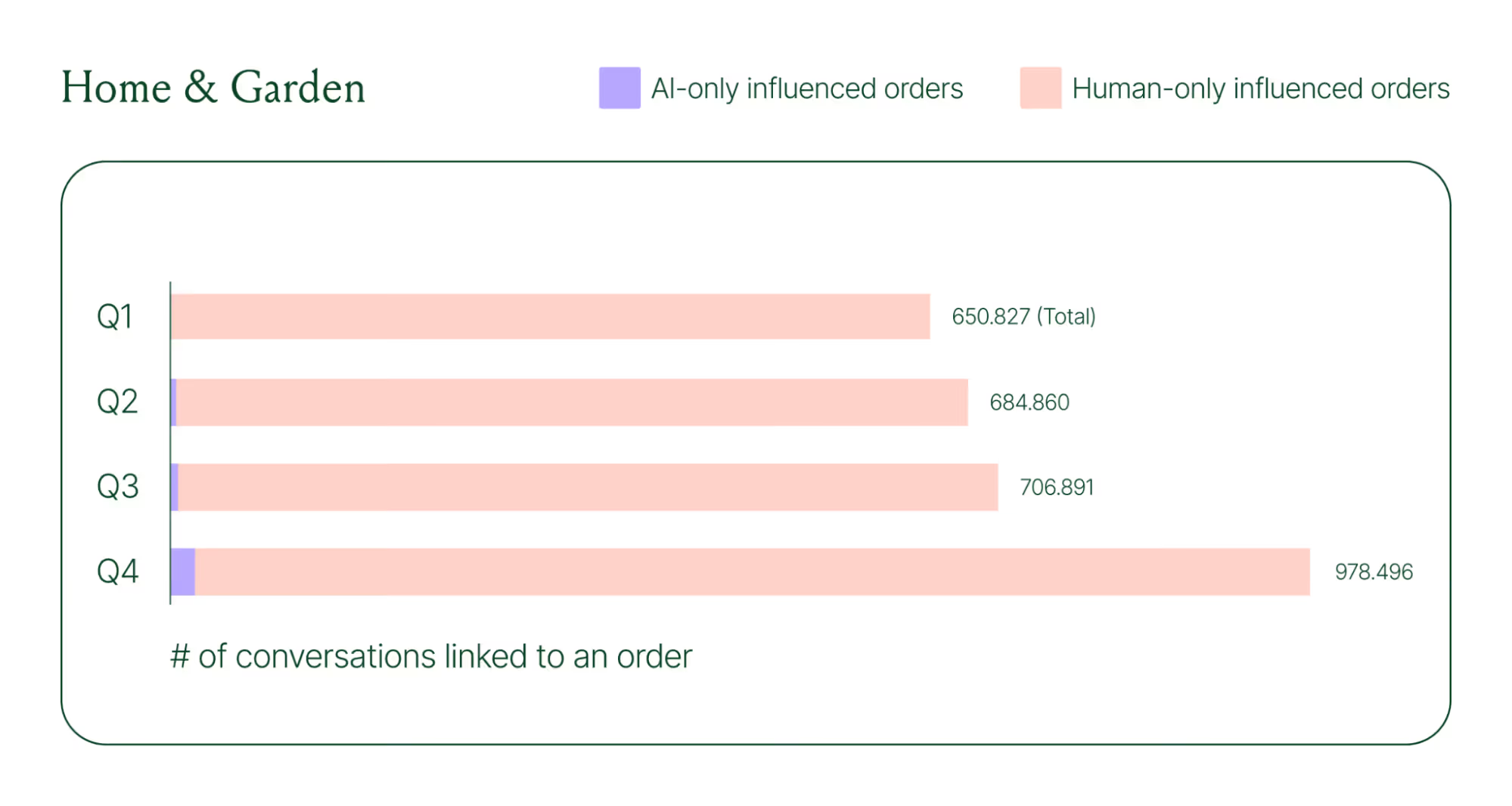

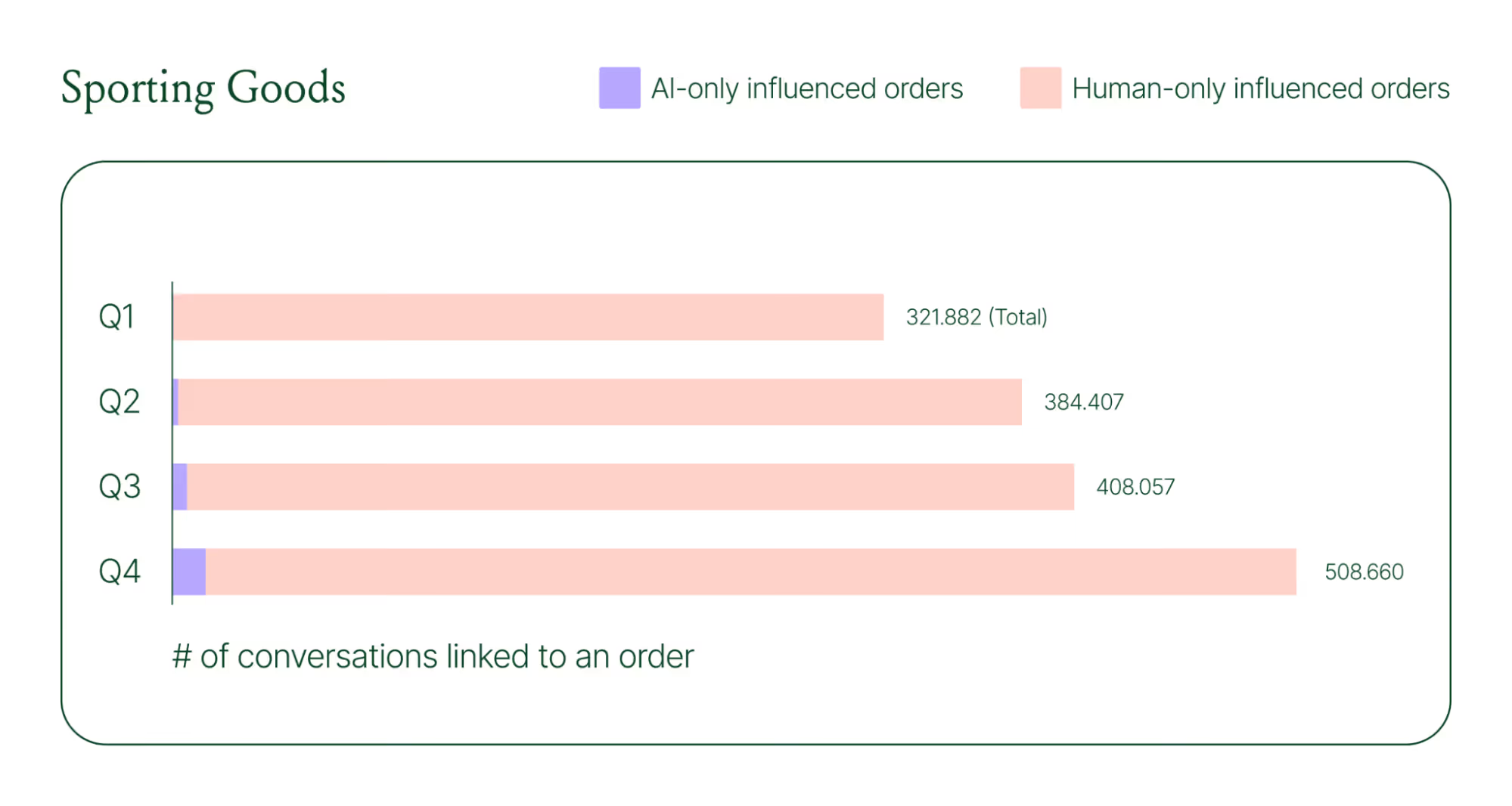

Looking at AI-only influenced orders across key verticals like Apparel and Accessories, Food and Beverages, Health and Beauty, Home and Garden, and Sporting Goods, the growth across a single year was significant.

Across industries, ecommerce brands saw AI step into conversations, reduce shopper hesitation, and drive higher QoQ conversion rates.

Learn more about AI-powered revenue generation in the full 2026 Conversational Commerce Report.

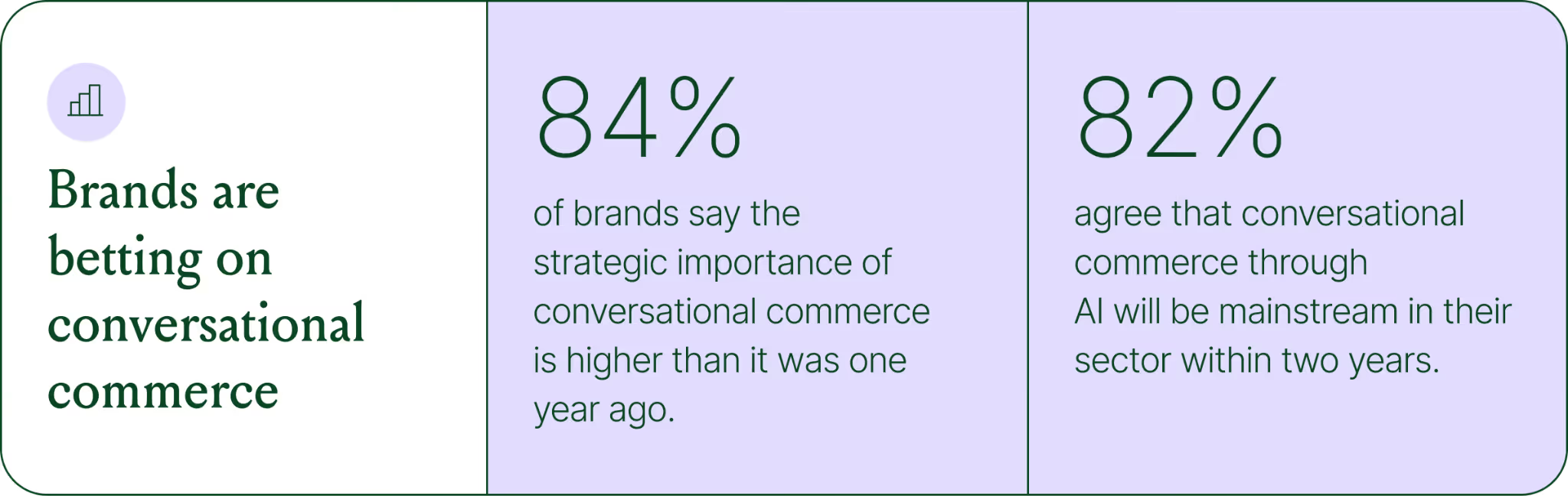

Why brands are making this a strategic priority

84% of brands say the strategic importance of conversational commerce is higher than it was a year ago. 82% agree it will be mainstream in their sector within two years.

That shift is registering at the leadership level because of what conversational commerce does to the buying experience. Creating one-to-one touchpoints earlier in the journey drives higher AOV, shorter buying cycles, and stronger purchase rates. Shoppers who get real-time answers to their questions are more confident.

What this looks like in practice: TUSHY

TUSHY, known for eco-friendly bidets and bathroom essentials, is a useful example of what happens when you take conversational commerce seriously.

Bidets aren't an impulse purchase. Shoppers have real questions about fit, compatibility, and installation. Those questions used to go unanswered until the CX team could respond, often after the customer had abandoned the cart.

TUSHY used Gorgias's AI Agent and shopping assistant capabilities to automate pre-sales support. AI Agent engaged shoppers in real-time conversations, addressed their concerns directly, and built confidence at the moment of highest intent.

This resulted in a 190% increase in chat-based purchases, a 13x return on investment, and twice the purchase rate of human agents.

How to apply this to your strategy

You don't need to overhaul your entire operation to start seeing results. The most effective approach is to start where the impact is clearest and expand from there.

A few places to begin:

- Pre-sales chat. Identify your most common pre-purchase questions (sizing, compatibility, shipping timelines) and ensure your AI can answer them confidently and promptly.

- Product page engagement. Use proactive chat prompts triggered by page behavior to start conversations before shoppers leave.

- Post-purchase follow-up. Let AI pick up the conversation after checkout with order updates and proactive support, reducing inbound volume and building trust.

- Human escalation. Define clearly which situations require a human agent – complex issues, emotional exchanges, high-stakes decisions.

Want to see the full picture of where conversational commerce is headed in 2026? Read the full report to explore the data, trends, and strategies shaping the next era of ecommerce.


The State of Conversational Commerce: 5 Trends Reshaping Ecommerce in 2026

TL;DR:

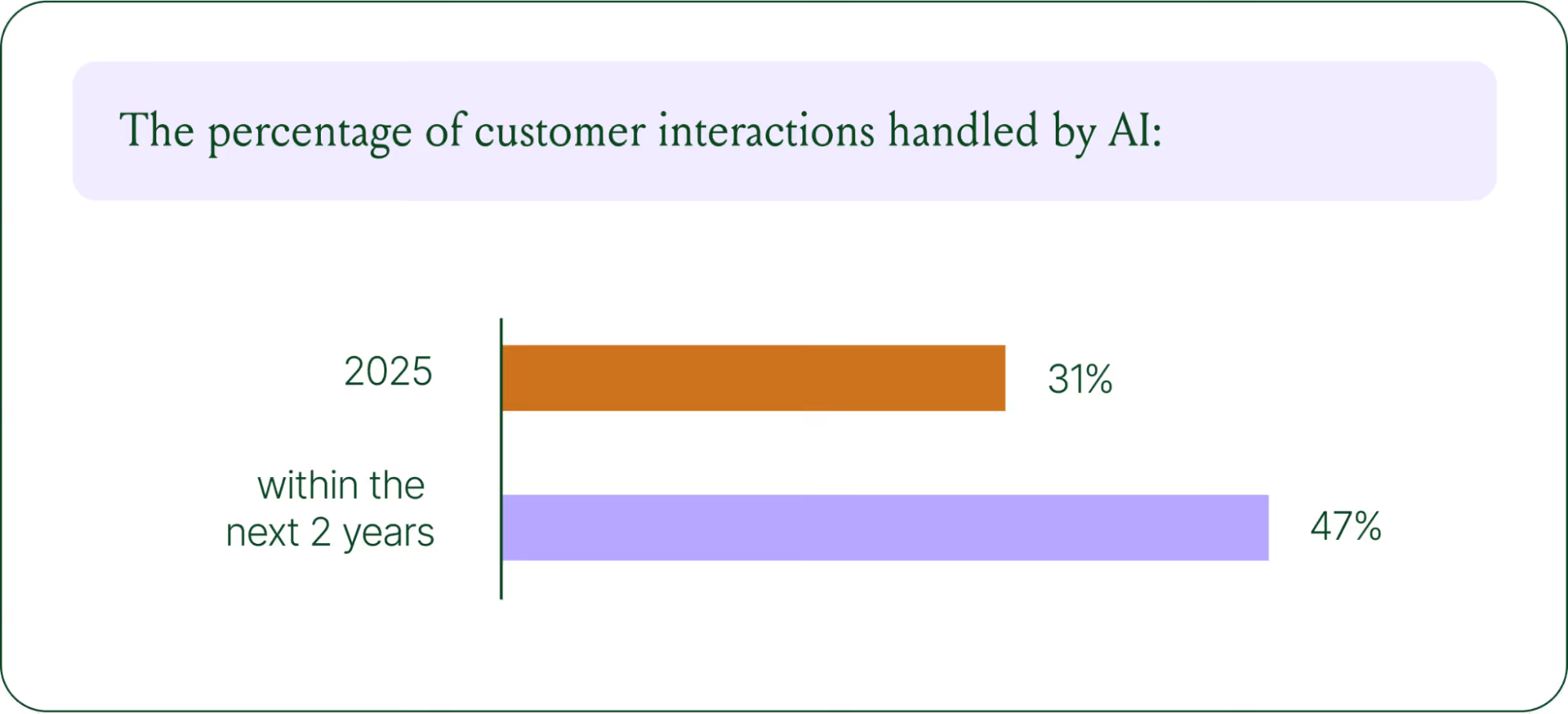

- AI is resolving tickets, not just replying. AI now handles 31% of customer interactions for ecommerce brands, and that number is expected to nearly double within two years.

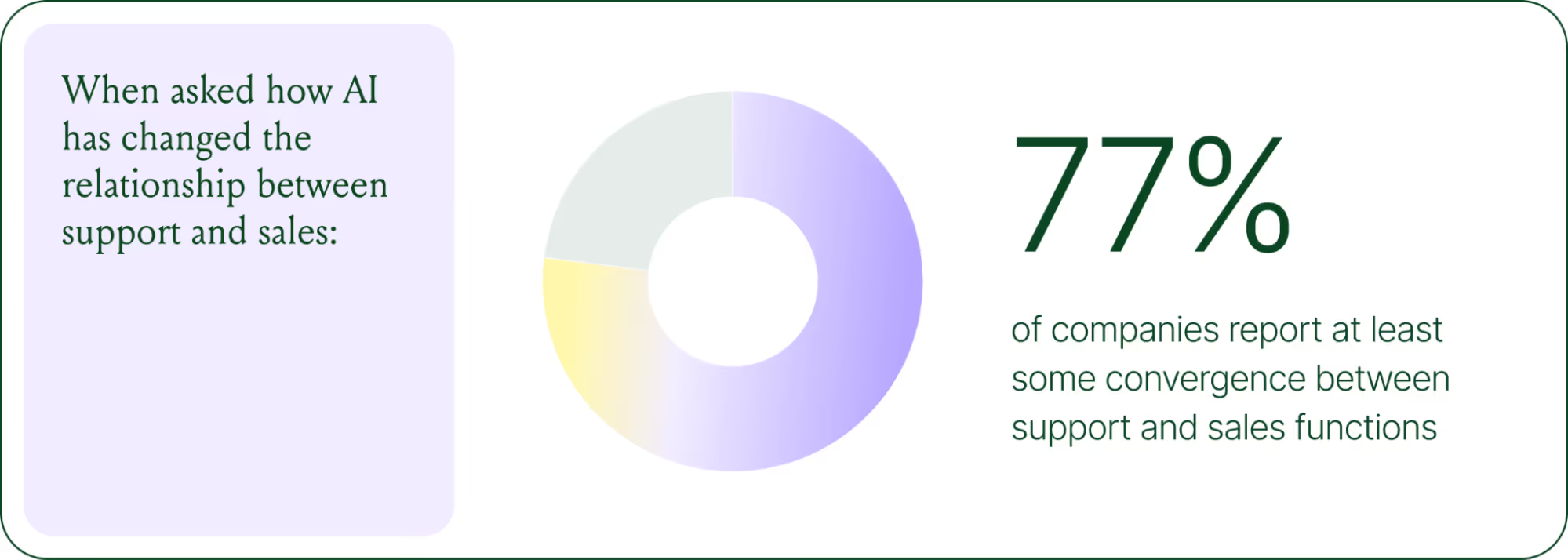

- Every channel is becoming a storefront. Conversations are replacing the traditional browse-and-buy journey, with 79% of brands reporting sales from AI-driven interactions.

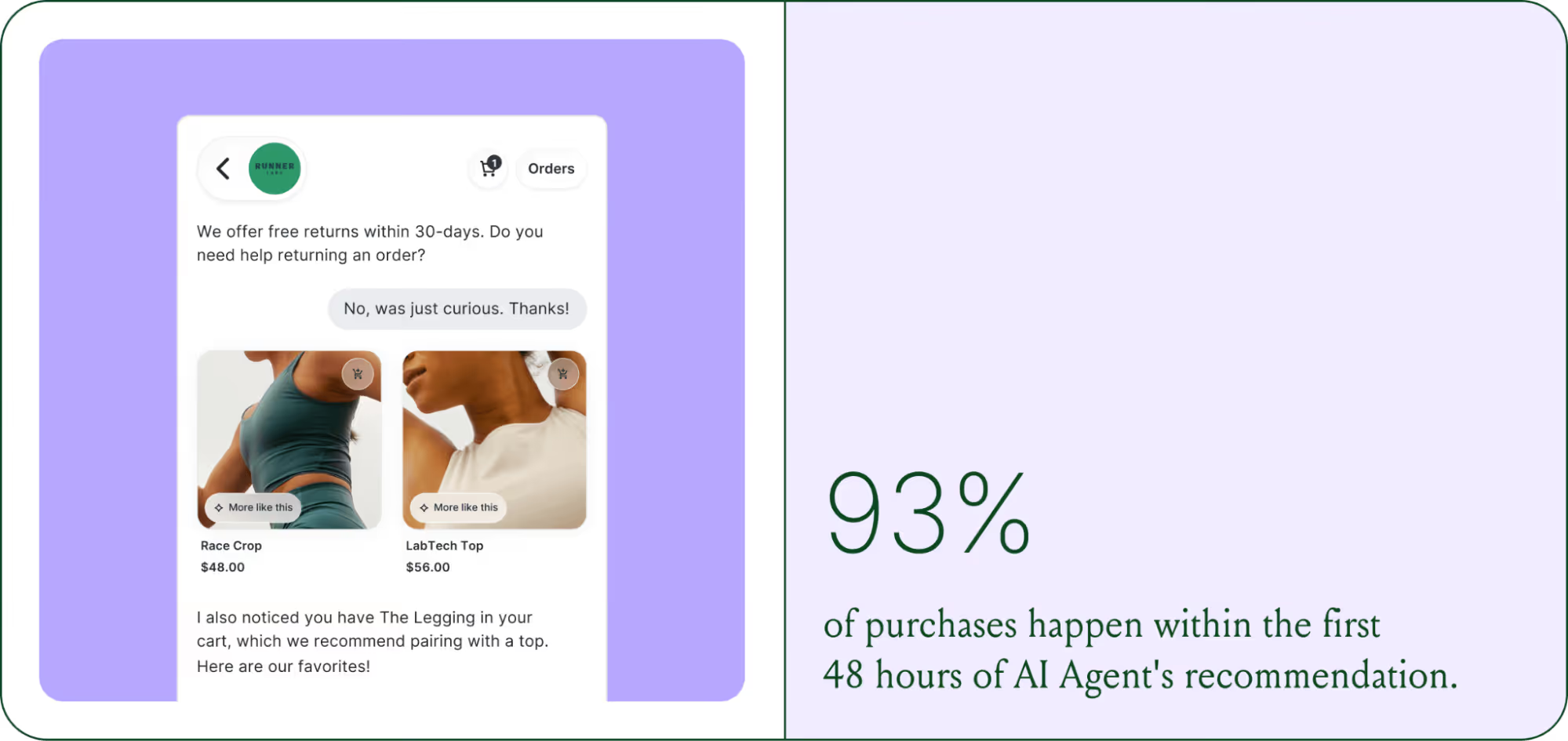

- AI is shortening the buying cycle. 93% of AI-influenced purchases happen within the first 48 hours of the conversation.

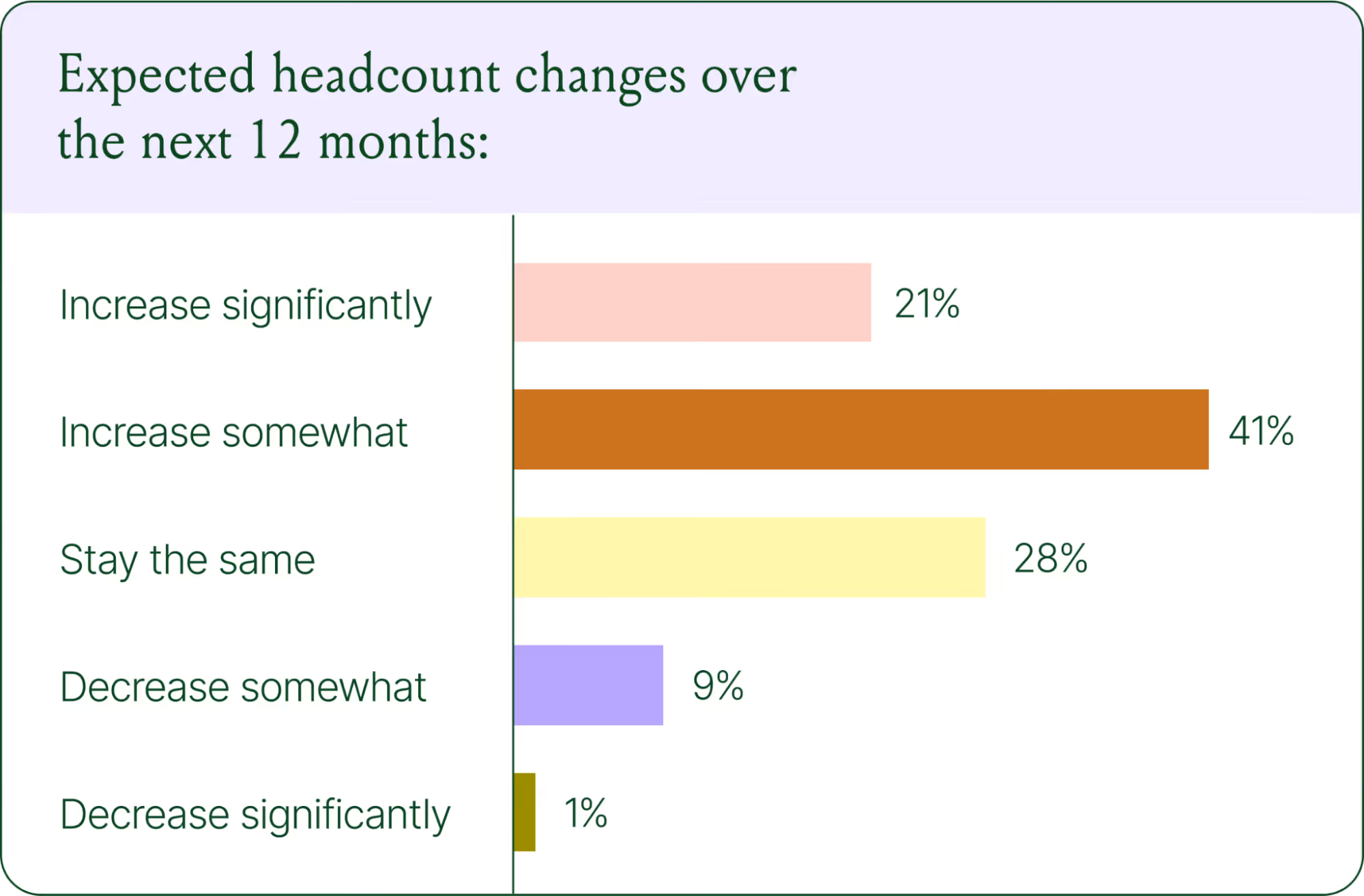

- CX teams are changing, not shrinking. Ecommerce brands are actively hiring for more technical roles to implement, coach, and maintain AI.

- The winning model is hybrid. AI handles volume and speed, while humans handle complexity and judgment.

The way shoppers buy online has shifted, and customers are at the center.

They no longer want to scroll through product pages, dig through FAQs, or wait 24 hours for an email reply. They open a conversation, ask a specific question, and expect a useful answer in seconds. Brands that can’t deliver these experiences at scale are seeing customer hesitation turn into abandoned carts and lost revenue.

This shift has a name: conversational commerce. It's the practice of using real-time, two-way conversations as your primary sales channel, through chat, AI agents, messaging apps, and voice.

What started as an experiment for early adopters has become a key growth lever, with 84% of ecommerce brands saying conversational commerce is more strategically important this year than last.

We surveyed 400 ecommerce decision-makers across North America, the U.K., and Europe to understand how conversational commerce and AI are reshaping the ecommerce landscape. These findings are complemented by aggregated and anonymized internal Gorgias platform data from 16,000+ ecommerce brands.

The State of Conversational Commerce in 2026 trends report breaks down all of the findings, including five key trends shaping the ecommerce landscape.


Trend 1: AI is table stakes for ecommerce and it’s no longer just about efficiency

A few years ago, adding an AI chatbot to your site that could provide tracking links and Help Center article recommendations was a differentiator. Today, it's table stakes. McKinsey found that 71% of shoppers expect personalized experiences, and 76% get frustrated when they don't get them.

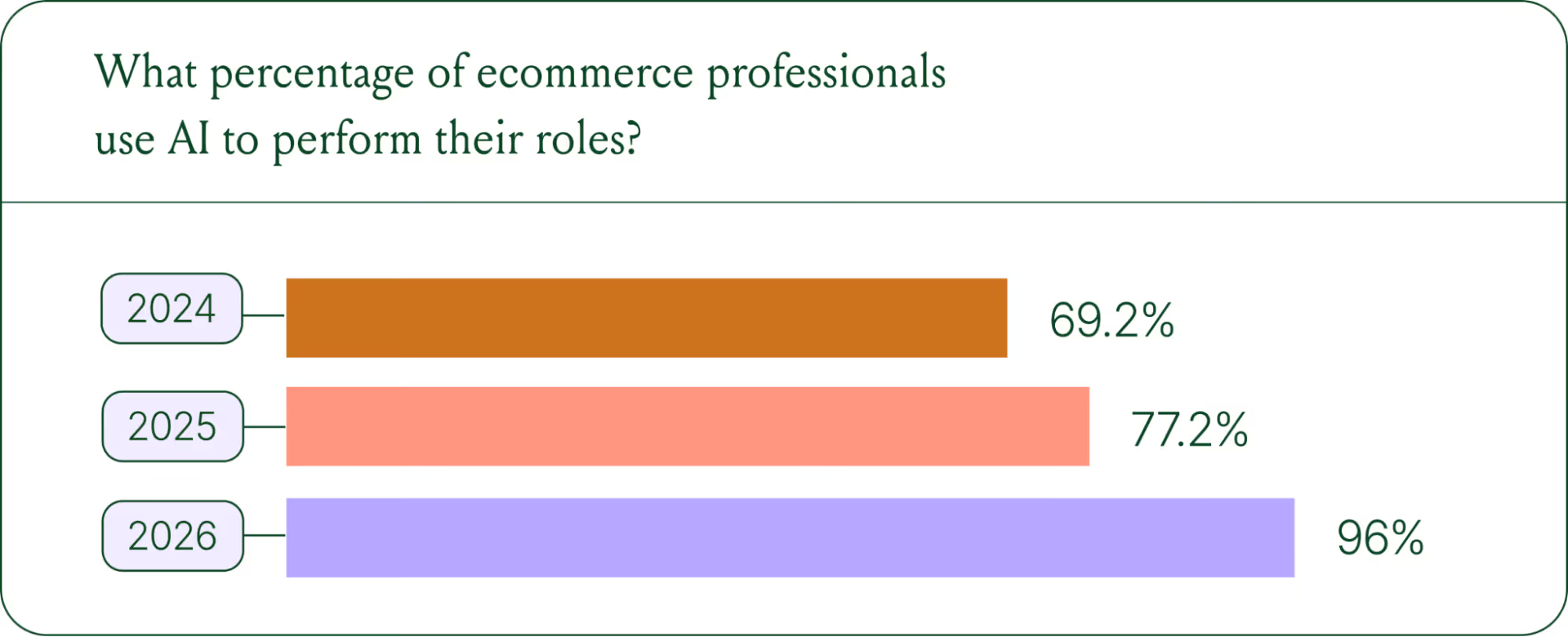

Right now, most ecommerce professionals use AI, with 93% having used it for at least 1 year. Enthusiasm is accelerating quickly, with only 30% of ecommerce professionals rating their excitement for AI at 10/10 in April 2025. Similarly, while AI adoption rose steadily year over year, it reached a clear peak in 2026.

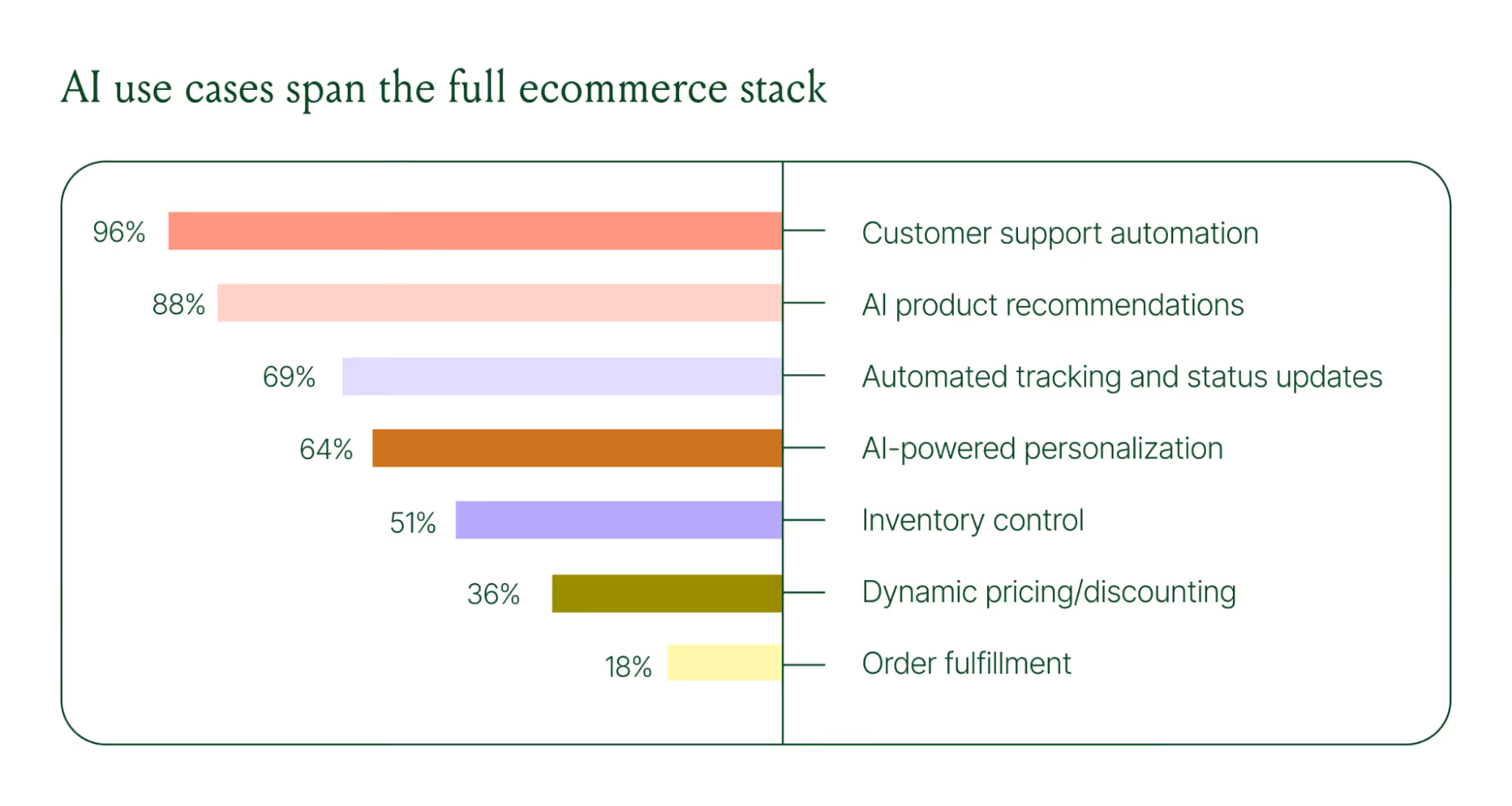

The use cases driving this adoption are practical and high-volume:

- Order tracking and status updates

- Returns, exchanges, and refund requests

- Shipping FAQs and delivery estimates

These are the tickets that flood brands’ inboxes every day. AI agents resolve them instantly, without pulling teams away from conversations that actually require human judgment.

Explore AI adoption and use case data in more depth in the full report.

Trend 2: Conversations are the new path to checkout

The traditional ecommerce funnel (visit site, browse products, add to cart, check out) is losing ground. Shoppers now discover products on Instagram, ask questions via direct message, and complete purchases without ever visiting a website.

Conversational AI is actively increasing revenue, with 79% of brands reporting that AI-driven interactions have increased sales and conversion in their business.

The practical implication is that every channel is becoming a storefront. Creating personalized touchpoints with customers earlier in the journey, through proactive engagement, is impacting the bottom line.

Read the full report to explore how AI conversions have increased QoQ by industry.

Trend 3: AI is accelerating the purchase cycle

Pre-purchase hesitation is one of the biggest conversion killers in ecommerce. A shopper lands on your product page, has a question about sizing or compatibility, can't find the answer quickly, and leaves. That's a lost sale that had nothing to do with your product.

Conversational AI changes that dynamic. When a shopper can ask a question and get an accurate, personalized answer in real time, the friction disappears.

Brands using Gorgias saw this play out at scale in 2025. When AI Agent recommended a product, 80% of the resulting purchases happened the same day, and 13% happened the next day.

Brands are further accelerating the buying cycle through proactive engagement. On-site features such as suggested product questions, recommendations triggered by search results, and “Ask Anything” input bars drove 50% of conversation-driven purchases during BFCM 2025.

Explore how AI is collapsing the purchase cycle in Trend 3 of the report.

Trend 4: AI is making CX teams more technical

There's a persistent narrative that AI is making CX teams redundant. The data tells a different story. 62% of ecommerce brands are planning to grow their teams, not cut them. But the scope of those teams is changing.

New roles are emerging around AI configuration and quality assurance. Teams are investing in technical members to write AI Guidance instructions, develop tone-of-voice instructions, and continuously QA results.

CX teams are also bridging the gap between support goals and revenue goals, as the two functions increasingly overlap.

The result is CX teams that are more technical than they were before. Agents who once spent their days answering repetitive tickets are now spending that time on higher-value work: complex escalations, VIP customer relationships, and improving the AI systems and knowledge bases that handle the volume.

Learn more about the evolution of CX roles in Trend #4.

Trend 5: The future is hybrid: AI-first, humans when it counts

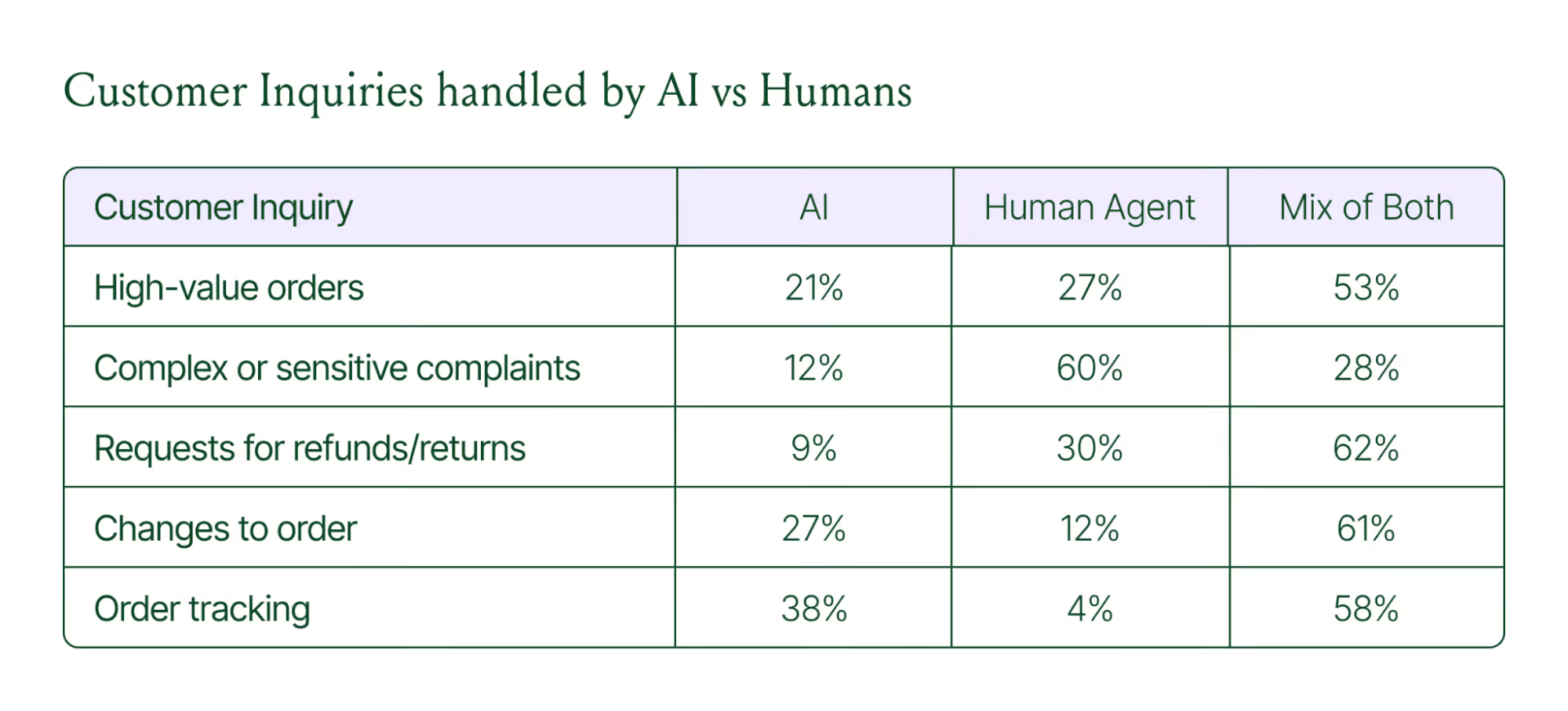

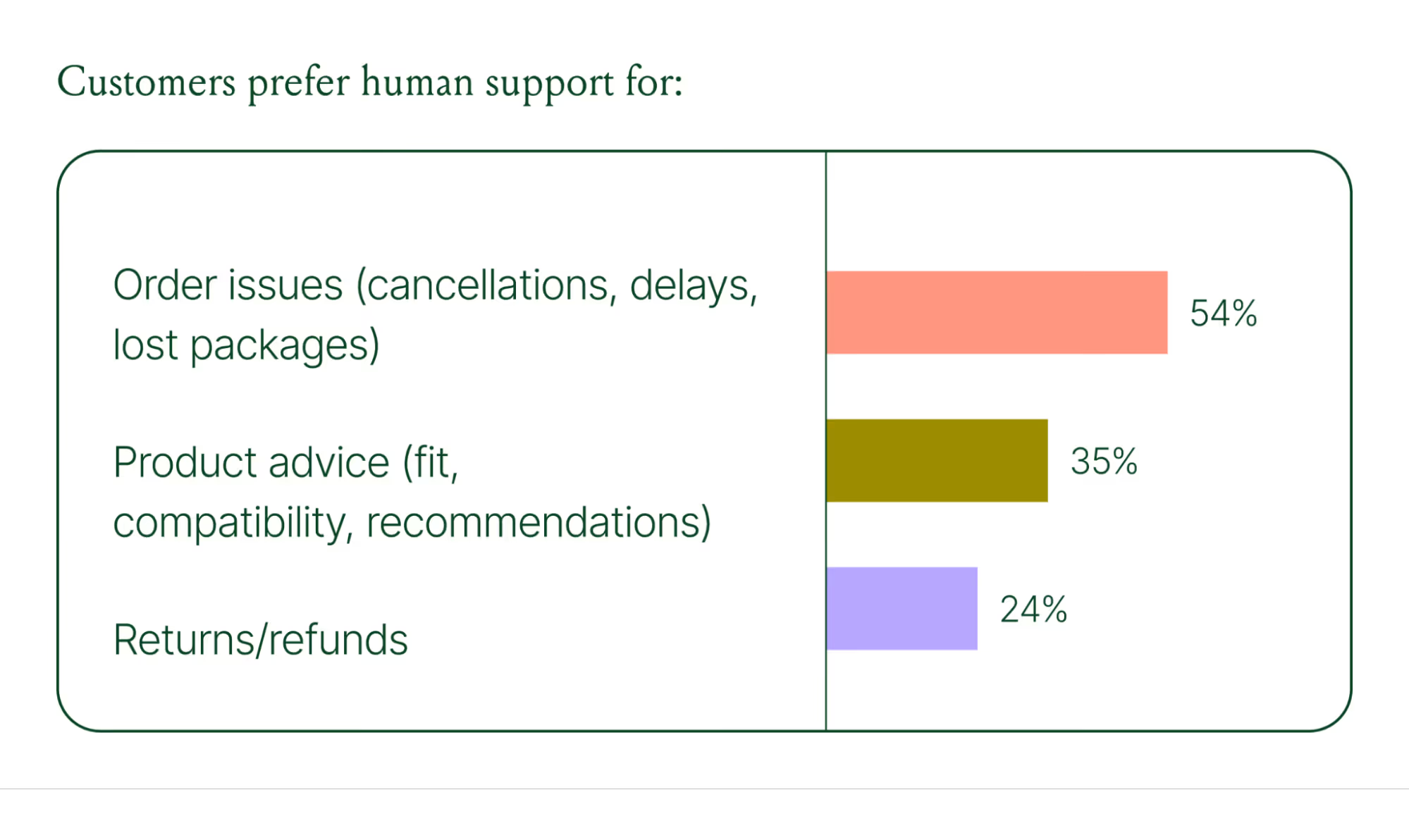

Despite increasing AI adoption, data shows that ecommerce brands shouldn’t strive for 100% automation. Winning brands are building systems in which AI handles repetitive tier-1 tickets, and humans handle complex, sensitive cases.

AI handles speed and scale. It resolves order-tracking requests at 2 a.m., processes return-eligibility checks in seconds, and answers the same shipping question for the thousandth time without compromising quality.

Human agents handle conversations that require context, empathy, or decisions that fall outside the standard playbook. There are several topics where shoppers still prefer human support.

Successful hybrid systems require continuous iteration, which means reviewing handover topics, Guidance, and AI tickets on a weekly basis.

Discover how leading brands are balancing human and AI systems in Trend #5.

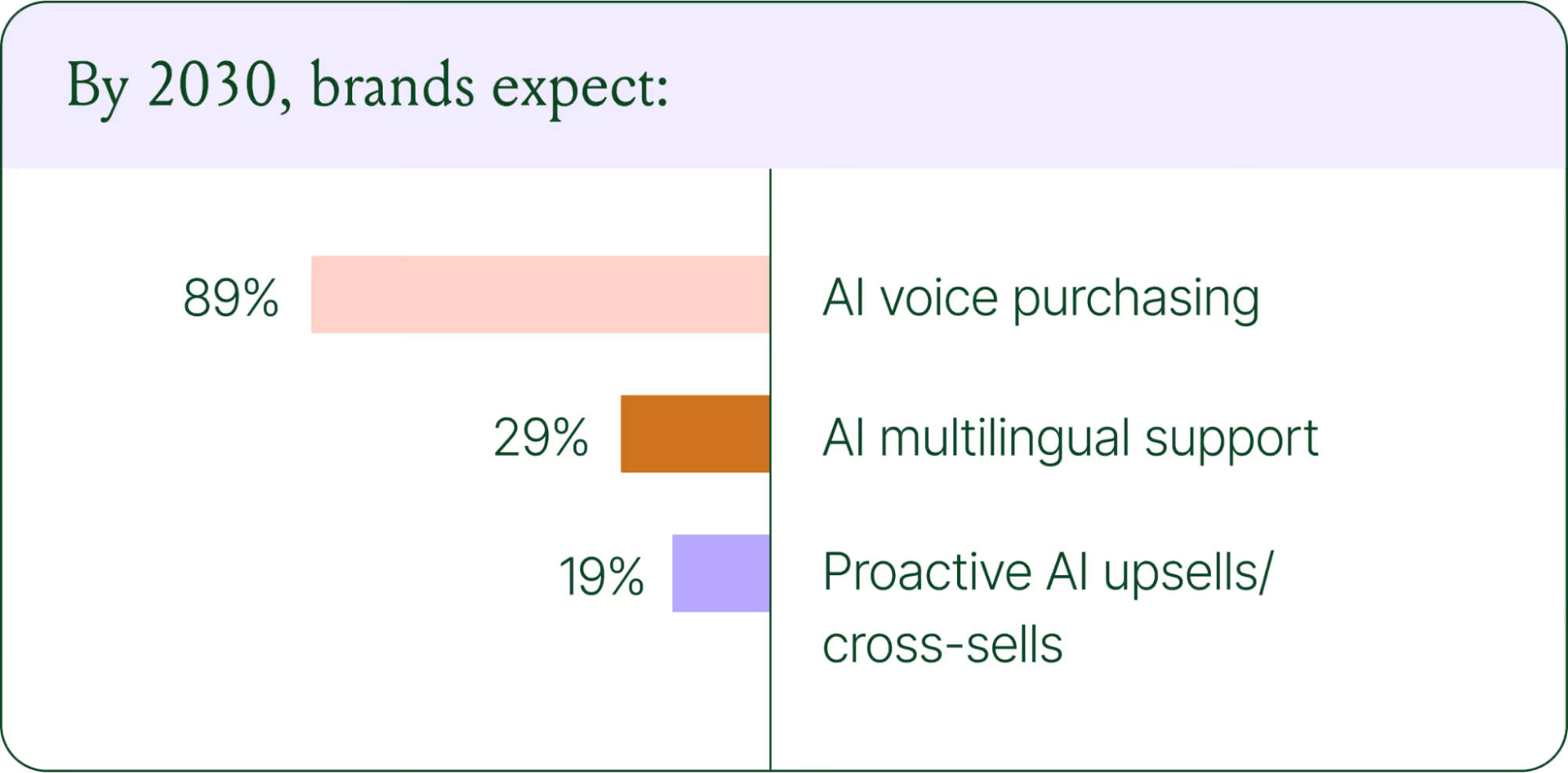

Where conversational commerce is heading by 2030

The 2026 trends are about expansion and standardization. The 2030 predictions are about what comes next.

Voice-based purchasing is the biggest bet on the horizon. Only 7% of brands currently use voice assistants for commerce, but 89% expect it to be standard by 2030. The vision is a customer who can reorder a product, check their subscription status, or manage a return entirely over the phone.

Proactive AI is the other major shift. Rather than waiting for a customer to reach out, AI will anticipate needs based on browsing behavior, purchase history, and where someone is in their relationship with your brand. Think of it as the digital equivalent of a sales associate who remembers what you bought last time and knows what you're likely to need next.

Explore where ecommerce brands are allocating their AI budgets in the full report.

Start building your conversational commerce strategy today

The brands winning in 2026 are creating smart, scalable systems where AI handles volume and humans handle nuance. They’re treating every conversational channel as an opportunity to serve and sell.

The data is clear: AI adoption is accelerating, customer expectations are rising, and the revenue impact of getting this right is measurable.


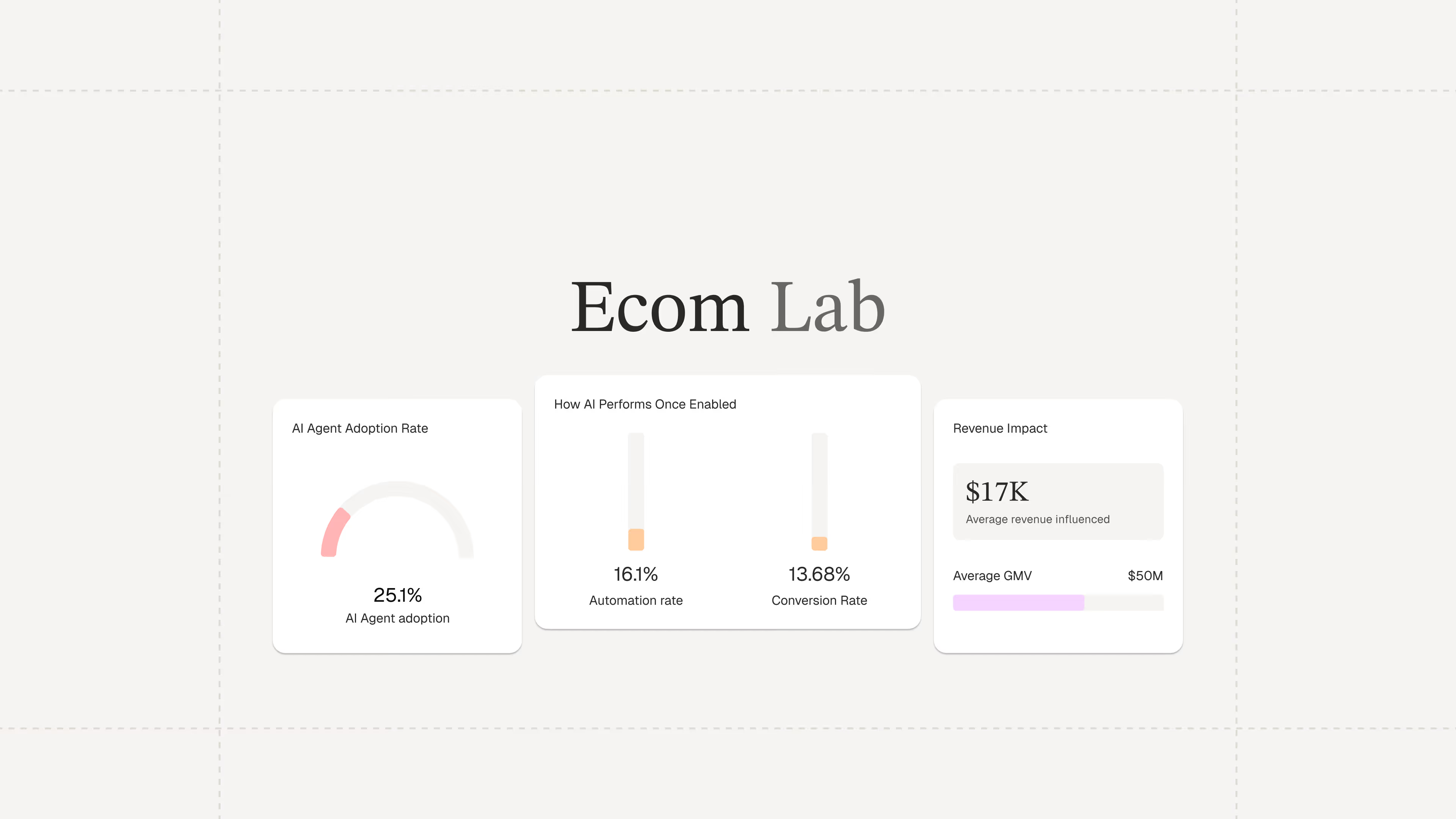

Ecommerce Finally Has a Research Hub Built on Real Data

TL;DR:

- The Ecom Lab is Gorgias’s public research hub for ecommerce insights. It shares real, first-party data to help teams understand industry performance and trends.

- It exists to solve the lack of reliable ecommerce benchmarks. Most available data is self-reported or too broad, making it hard for teams to accurately measure performance.

- The goal is to give ecommerce teams a clear baseline for smarter decisions. With real benchmarks, you can better evaluate performance and opportunities.

- The Ecom Lab makes metrics like AI adoption, response times, and CSAT visible. These are segmented by brand size, GMV, and vertical so you can benchmark more precisely.

- The latest reports reveal major gaps in AI adoption and benchmarking practices. They also highlight how inefficient support processes are driving costs.

Industry benchmarks for ecommerce are hard to come by. Most of what's out there is self-reported, survey-based, or too aggregated to be usable. Teams are left wondering whether their AI adoption is on par with industry standards or if their response times are costing them revenue.

That's a gap we're in a unique position to close.

Gorgias processes millions of customer conversations across thousands of ecommerce brands every day. This has given us a rare, unfiltered view into how the industry operates. But until now, we’ve kept those insights largely internal.

Today, we're making them public with the Ecom Lab.

The result is years of first-party data from thousands of ecommerce brands, packaged into findings that give teams a real foundation to build their strategy on.

What is the Ecom Lab?

The Ecom Lab is Gorgias's public research hub for ecommerce. It publishes insights and reports on AI adoption, support performance, financial impact, and industry trends.

The goal is simple: give teams a real baseline to measure against and to uncover the industry's inner workings.

What data can you find in the Ecom Lab?

Metrics that actually move decisions.

The Ecom Lab publishes metrics that matter to ecommerce professionals, including AI adoption rates, first response times, CSAT scores, conversion rates, and ticket intents, all broken down by brand size, GMV tier, and industry vertical.

For the first time, teams can see exactly where they stand in comparison to the broader market.

Read the first three reports now

AI is Everywhere reveals why roughly 4 in 5 ecommerce brands still haven't deployed AI in customer-facing support.

Stop Benchmarking Against the Average argues that support teams should benchmark response times against their specific industry vertical rather than the overall average.

Most Brands are Overpaying for Support breaks down the actual cost of support ticket volume and what happens when AI handles the load.

Further reading

Playbook: How Berkey Filters Drove Customer Adoption of a New SMS Support Channel

When your company decides to launch a new support channel — usually for efficiency and customer convenience — setting it up is only half the battle. The other half is driving customers toward the new channel (and away from your old ones). Without a concerted effort for customer adoption, you risk paying for a support channel that nobody uses.

Berkey Filters, a world leader in water purification and seller of water filter systems, wanted to add SMS as a support channel for their shoppers. SMS is more convenient for on-the-go shoppers and allows agents to provide service to multiple shoppers more efficiently than other channels.

Berkey Filters launched SMS with Klaviyo and wanted to add the Klaviyo SMS integration to Gorgias to unify customer conversations in one platform.

The launch was one of the most successful we’ve seen to date, both in terms of ticket efficiency and customer adoption. Within a month of launching SMS, Berkey Filters:

- Achieved a 2-minute average first-response time for SMS

- Achieved a 20-minute average handle time for SMS

- Converted 2% of tickets to SMS from more time-consuming channels

- Decreased time-consuming phone tickets by 23%

- Decreased time-consuming email tickets by 21%

We sat down with Jessica, the Gorgias account owner and Customer Experience Analyst for Berkey Filters, to ask how Berkey Filters achieved such superb support stats so quickly. Jessica generously shared her strategies for driving customer adoption of the new support channel.

In this Playbook, learn about the six tactics Berkey Filters used to launch SMS, increase the number of customers using this channel, and decrease ticket volume on older channels.

Why add SMS to your helpdesk in the first place?

SMS is one of the fastest-growing support channels today. It’s one of five channels consumers expect from brands, alongside email, website, voice, and chat.

Consumers love SMS because it’s fast, convenient, and always with them (even on the go). They don’t need to block off time in their day to sit by their laptop or on the phone to deal with a support situation. They can go about their day and effortlessly reply to texts whenever they have a moment – something most people already do.

Support managers love direct messaging channels because conversations are typically shorter and resolved faster. And as long as SMS tickets are managed in the same places as other channels, it’s easy for agents to manage.

Jessica was specifically interested in using the SMS channel in Gorgias for Berkey Filters to achieve the following goals:

- Decrease the number of phone calls they received (because agents can only talk to one person at a time)

- Decrease the number of times a customer reaches out across multiple channels for the same issue (because faster response times on SMS would make it less likely for duplicate tickets to come in)

- Let customers using mobile — which accounts for half of their website visits — contact customer service on the device they’re already using

- Easily send photos back and forth with customers (especially because they frequently get questions about how to fix or store their products)

- Offer a channel that doesn’t require a stable WiFi connection (because they have customers all over the country, including in rural areas that don’t always have reliable service)

Jessica’s team also views SMS as a modern support channel. More and more brands want to offer a customer service experience that’s seamlessly integrated into the shopper’s day, and Berkey wanted to be an early adopter.

While some of these benefits apply to almost any store, make sure you’re clear on your “why” before adding a new support channel. This will help you prioritize it against other channels and justify the work that goes into adding a new method of communication with your customers.

How to add Gorgias SMS to your helpdesk

For the purposes of this playbook, we’ll assume you’ve already created your Gorgias helpdesk. If you haven’t, get started with a free trial or schedule a call with our team for a personalized demo.

Gorgias SMS allows you to send and receive 1:1 SMS and MMS messages with your customers. To add it, go to Settings > Integrations > SMS.

You’ll need a Gorgias phone number to get started. If you have one already (likely because you use Gorgias voice support), you can add the SMS integration without changing numbers. If you do not have a number yet, it’ll prompt you to create one first.

If you already have a phone number but it isn’t owned by Gorgias, you’ll need to port it. Learn how in this help doc.

If you’ve just added SMS (or any new channel), there are a few administrative tasks we recommend before following the steps outlined in this playbook:

- Create a dedicated view for SMS tickets

- Set up routing Rules for SMS tickets

- Create SMS-specific Macros (SMS messages should probably be shorter than some of your other channels, for example)

- Review existing Rules to add or exclude SMS as a channel trigger

Now, we’ll share exactly how Jessica promoted SMS for Berkey Filters customers.

6 steps to drive customer adoption of your new SMS channel (inspired by Berkey Filters)

Jessica knew they would eventually add their SMS number directly on the Berkey Filters website, but she also knew she’d have to wait for her developer to do so. In the meantime, she started with the tools available to her in Gorgias.

Here are six tactics Jessica used to drive adoption of the newly launched support channel:

- Launch a Gorgias Chat Campaign on the Berkey Filters “Contact us” page

- Customize the auto-reply that was sent to new email tickets

- Add the SMS number directly on the “Contact us” page

- Promote SMS in the top banner of the website

- Leverage their 2 min. first response time (FRT) for SMS in messaging

- Maintain that FRT with an SLA view in Gorgias

Let’s break each of these down.

1) Launch a Gorgias Chat Campaign on the Berkey Filters “Contact us” page

Even though Jessica would need to wait for her developer to update the actual page content, she knew she could launch a Gorgias Chat Campaign on the “Contact us” page to announce they now offer support via SMS. (If you don’t know, a Chat Campaign is a live chat session that automatically and proactively triggers for targeted website visitors, often to announce special promotions.)

Here’s what their campaign looked like:

Jessica’s campaign automatically opens a live chat box announcing the launch of SMS for anyone who stays on the Berkey Filters contact page for longer than 30 seconds. That time frame is a good way to target anyone who’s clearly trying to identify the best contact method, and not someone who accidentally clicked onto the page (and would likely bounce before 30 seconds).

To create a Chat Campaign in Gorgias, go to Settings > Integrations > Chat and select the chat widget you want to use. Click the “Create Campaign” button in the top right.

From here, you can enter the URL(s) the campaign should appear on, set a required time spent on the page, and customize the message that displays.
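The campaign settings described above boil down to two conditions: the visitor is on a targeted page, and they have stayed there long enough. Here is a minimal Python sketch of that logic. The `ChatCampaign` class, the `should_trigger` function, and the `/pages/contact-us` path are all hypothetical names chosen for illustration; they are not part of the Gorgias product or API.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class ChatCampaign:
    """Hypothetical model of a campaign: target paths, delay, and message."""
    url_paths: list[str]
    min_seconds_on_page: int
    message: str


def should_trigger(campaign: ChatCampaign, page_url: str, seconds_on_page: float) -> bool:
    """Return True when the visitor is on a targeted page and has stayed long enough."""
    # Normalize the path so a trailing slash does not prevent a match.
    path = urlparse(page_url).path.rstrip("/") or "/"
    on_target_page = path in campaign.url_paths
    return on_target_page and seconds_on_page >= campaign.min_seconds_on_page


# Mirrors the Berkey Filters setup: contact page, 30-second threshold.
sms_launch = ChatCampaign(
    url_paths=["/pages/contact-us"],  # assumed path, for illustration only
    min_seconds_on_page=30,
    message="We now offer support by text message!",
)
```

With this sketch, a visitor who has spent 45 seconds on the contact page would see the campaign, while someone who bounces after 10 seconds would not.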

Read this help doc to learn more about chat campaigns.

2) Customize the auto-reply on new email tickets

One of the best ways to tell customers about a new support channel is to promote it on one of your existing channels — especially to customers who are already accustomed to those existing channels and may never visit the contact page again.

For the segment of customers who already use email to contact support, Jessica leveraged the initial auto-reply that Berkey Filters sends when a customer emails them to announce the new, faster channel.

In addition to the standard, “Thanks for contacting us! An agent will reply back shortly,” Jessica added, “We are currently experiencing high contact volumes and will be responding as quickly as possible. Our chat and text response times are typically faster. We are now accepting text messages at 1-800-350-4170.”

By customizing the auto-reply to promote the new channel, Jessica met Berkey Filters’ customers where they were to make sure they knew about the latest and greatest way to get support.

3) Add the SMS number directly on the “Contact us” page

At this point, Jessica got developer support to add the support phone number to the website. The contact page is a natural location to add any new support channels, because you know new customers will go there looking for contact information.

Here’s what the Berkey Filters “Contact us” page looks like:

When building this page, Jessica made many intentional decisions to funnel visitors toward the new channel. Specifically, she:

- Featured SMS next to another efficient (and therefore preferred) channel

- Put the phone number in the headline so it’s easy to see

- Added “Text Us” and chat bubble visuals to clarify the number is for texting, not calls

- Pointed out that texting is great for “Conversation On The Go,” reminding people to use the channel if they plan to step away from the computer

- Set expectations by listing each channel’s average response time (ART)

The lesson? When releasing a new support channel, don’t be afraid to give extra context around it to help your shoppers understand when they should use one over the other.

4) Promote SMS in the top banner across the website

The banner at the top of the website is a high-visibility location that’s especially great for getting in front of returning customers (since they may not need to visit your contact page anymore).

Brands usually use the top banner for promotions or sales, but Berkey Filters uses it for a mix of sales and support to cater to the entire customer experience. If you refresh their website a few times, you’ll see it rotate through three messages:

- Free shipping on orders over a certain amount

- Chat now for an immediate response

- Text us for support on the go

5) Promote first response time (FRT) for SMS in messaging

You might’ve picked up on this already, but Jessica was doing something really strategic with her messaging about SMS: She was promoting their first response time of 2 minutes.

That’s fast! And therefore, a pretty compelling reason for shoppers to use it over other, slower channels like email or voice.

Now, obviously this only works if your team is achieving a fast response time like that and willing to maintain it. (More on that in the next point.)

What’s important is that Gorgias gives you insights into your support team’s performance. While that’s useful for internal planning (staffing, budgeting, etc.), we also highly recommend leveraging these data points with your own customers to show the value of the support you provide.

Support stats that are great to leverage when promoting support via SMS:

- Global first response time

- SMS first response time

- Global resolution time

- SMS resolution time

- Global CSAT score

- Total or percentage of tickets your brand has answered via SMS (if you’re still trying to increase adoption post-launch)

We don’t currently include SMS CSAT score in Gorgias reporting. If you’d like to measure and promote your SMS CSAT score, share that product feedback here!

6) Maintain that FRT with an SLA view in Gorgias

To help her team keep those impressive first response and resolution times, Jessica knew she needed to improve (and not just measure) those times. She set up a service-level agreement (SLA) view in Gorgias that shows SMS tickets that are open and were created more than one minute ago.

Here’s what that looks like:

This view sits at the top of their sidebar along with a few other SLA-based channel views, so agents can quickly prioritize what tickets they should solve next.
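To make the view's filter concrete, here is a rough Python sketch of the same conditions: channel is SMS, status is open, and the ticket was created more than one minute ago. The `Ticket` class, its field names, and the `sms_sla_view` function are assumptions for illustration only. In Gorgias itself, the view is configured through filters in the interface, not code. Sorting oldest first is also a choice made here, not something the source specifies.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Ticket:
    """Hypothetical ticket record with only the fields the view needs."""
    id: int
    channel: str
    status: str
    created_at: datetime


def sms_sla_view(tickets, now, max_age=timedelta(minutes=1)):
    """Return open SMS tickets created more than max_age ago, oldest first."""
    overdue = [
        t for t in tickets
        if t.channel == "sms"
        and t.status == "open"
        and now - t.created_at > max_age
    ]
    # Oldest tickets come first so agents pick up the longest-waiting customer.
    return sorted(overdue, key=lambda t: t.created_at)
```

The one-minute threshold sits below the two-minute first response time the team promotes, which leaves agents a buffer to reply before the promise is missed.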

In addition to the view, Jessica created an Auto-Reply Rule that sends the first message to an SMS ticket.

This message thanks the customer for texting support, and states the business hours for Berkey Filters. We love how this helps set expectations right from the start, especially for customers who might text in outside of these hours. (So they don’t text again waiting for a reply!)

Here’s what that Rule looks like:

Last but not least, it’s worth mentioning that Jessica was also incredibly intentional about rolling all of this out to the Berkey Filters agents. Specifically, she involved them in the decision to launch the new channel, trained them on the new system, and made sure they were prepared before launch.

None of this would be possible if agents were unsure how to handle incoming SMS tickets or use the SLA view.

Berkey Filters’ results from adding support via SMS

We’ve already teased some of the impact that Berkey Filters has seen since adding SMS support, but how does it all add up?

In their first 30 days using Gorgias SMS, Berkey Filters:

- Closed over 250 SMS tickets

- Sent almost 1,400 messages via SMS

- Achieved a 2-minute first response time

- Achieved 20-minute resolution time

- Converted 2% of all tickets to SMS

- Decreased phone calls by 23%

- Decreased emails by 21%

That’s remarkable! And while those stats certainly speak to the high quality of their support team, they first needed to make customers aware of and excited about the new channel. If you decide to launch a new support channel, we recommend following Berkey’s lead and creating an intentional adoption campaign to accompany the launch.

Get your customers excited about texting your brand

Prioritizing SMS shifts customer service conversations to a “live” channel where agents can help multiple customers at once, giving everyone a better experience.

And even if you’re strained for resources (like waiting for your developer to be able to update your store’s site), you can follow Berkey Filters’ lead and use other features and channels in Gorgias to start promoting your new channel.

Gorgias also integrates with SMS marketing platforms like Klaviyo to make texting a seamless part of your customer journey (and easy for agents to manage).

Specifically, if customers reply to an SMS sent with Klaviyo, Gorgias will create a ticket so your agents can respond right away. Plus, Klaviyo and Gorgias share customer data in real time, so you have as much information about your customers as possible in both tools:

“Having the Gorgias + Klaviyo integration has helped provide a service to our customers that we did not have before. Our customer service department is now able to provide a near-instant response via text message without having to exit Gorgias. This feature has made the entire process of getting to these tickets so effortless and much more efficient.”

— Jessica Robles, Customer Experience Analyst at Berkey Filters

To get started with Gorgias SMS, log into your helpdesk or click here to sign up for free.

What’s New with Gorgias: June 2022 Product Updates

Every month, our product team holds a casual, conversational event with our customers to demo new features, receive real-time feedback, and host live Q&As.

Watch the video below or read on for a recap of our latest product updates.

1. Admins can require Two-Factor Authentication for all users (3:18)

While Gorgias does a lot to keep your data secure, one of the best ways to add an extra layer of security is to encourage agents to use secure passwords and two-factor authentication (2FA).

And with our latest update, you can do more than just encourage. Admins can now require agents to set up two-factor authentication.

Once an admin toggles the option, all users in your account will have 14 days to set up 2FA. After 14 days, users will need to set it up to access your helpdesk.
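The grace period works like a simple date check. The Python sketch below is a hypothetical illustration, not Gorgias code: the function name `is_locked_out` and its parameters are made up, and treating day 14 itself as still inside the grace period is an assumption.

```python
from datetime import date, timedelta

# The 14-day window comes from the update above; the boundary handling is assumed.
GRACE_PERIOD = timedelta(days=14)


def is_locked_out(enforced_on: date, today: date, has_2fa: bool) -> bool:
    """Return True when a user must set up 2FA before accessing the helpdesk."""
    deadline = enforced_on + GRACE_PERIOD
    return not has_2fa and today > deadline
```

For example, if an admin turns on the requirement on June 1, a user without 2FA can still log in on June 10 but would be prompted to set it up on June 16.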

2. Add a contact form in Gorgias Chat for a better CX outside business hours (11:38)

It can be hard to follow up with chat tickets that were left during off-business hours. Sometimes customers don’t include enough details in their message, making it harder to follow up on the next day.

Using a contact form in Gorgias Chat, you can capture a customer's email and message in a short conversational way. The contact form is designed to collect more information without disrupting the conversational experience, so you can easily follow up and help via email when you log back into Gorgias.

The contact form prompts your web visitors to select a subject (to help you triage tickets faster), and then provide more details about their issue so your agents know what they’re trying to solve. Last, it collects the shopper’s email address so you know who to follow up with and where to reach them. All of this information will be collected in a single ticket in your helpdesk.

Read this help center article to learn how to enable the contact form in your Gorgias chat.

3. Create sub-categories in Help Center (19:55)

Create multiple levels of categories to improve Help Center organization, so your shoppers can navigate and find help content for related issues more easily.

These new categories also give your team more options when creating a Help Center, so you can organize FAQs in whatever way makes sense for your brand.

4. New Managed Rules (For Automation Add-on Subscribers) (21:30)

Rules are a powerful feature that let Gorgias users automatically organize, tag, and reply to tickets. Thousands of Gorgias customers have adopted rules in their customer support workflow to save time and allow themselves to provide faster and higher quality service. Focusing on the common inquiries like WISMO, we’ve built Managed Rules to optimize time for Automate subscribers.

Managed Rules are pre-built automations developed by the Gorgias team and include some of the most common and helpful automations. They need no code, no setup. Install them from the Rule Library and you’re good to go! If we improve the Rule, it will automatically update in your helpdesk, no action from you required.

Customer Q&A (24:44)

Tune into the above timestamp if you want the full 25 minutes of customer questions and answers from our product team. Here were a few of the highlights!

With only two weeks left in Q2, which of the planned releases on the public roadmap will actually launch? (25:44)

Some features listed in Q2 of our public roadmap will indeed be released in Q2, while others will spill into Q3 (or later). We’re proud to provide transparency with our public roadmap, but please understand that it’s subject to change throughout the quarter. We do our best to update our roadmap frequently but can’t always do so right away.

Although we can’t promise to release any of these features in Q2, a few features we plan to release sooner rather than later include:

- FAQ article recommendations in chat

- Create a Chat report in help desk statistics

- Display agents’ phone availability state

Our team is actively prioritizing the roadmap for Q3 right now. Check back soon to see the latest plan!

Will you be improving the returns and cancellations workflow for the Help center? We use Loop, but can’t integrate our self-service return portal with our new Help Center. (33:40)

This is a limitation we’re definitely aware of, and are exploring options. The long-term solution is to build better integrations with Loop and other top returns platforms. If this is a feature you’d like to see, please submit the request here.

When will the option to convert phone calls to SMS be available? (45:15)

We’re hoping to release more features around that at the start of 2023. Today if you receive a phone call, you can always reply to that ticket via SMS. In the future, we’ll focus on helping you deflect the phone call entirely and prioritize SMS instead.

Join us for the next monthly product event

Thanks for checking out the recap of our June customer product event. We hold these events once a month as a way to share the latest releases and connect with our customers in real time. It’s a favorite of both our customers and the Gorgias team.

If you’d like to sign up for the next one to attend live, you can register here. We’d love to have you join us!

New apps that integrate with Gorgias: Summer 2022

There are now over 85 incredible integrations in the Gorgias App Store with the tools that power your ecommerce store. While each app is unique, together these integrations can help your agents work more efficiently to provide excellent service to your customers.

Take a look at the newest additions so far from 2022.

In the first half of the year, we’ve launched 15 new integrations for your Gorgias helpdesk:

- Klaviyo (updated!)

- Gorgias SMS

- Thankful AI

- NetSuite

- Okendo

- Narvar

- Skio

- Via Software

- Clyde

- Smartrr

- ShipMonk

- Annex Cloud

- Daton

- Shogun Frontend

- Gobot

- Shop2app

Read on to learn how you can use these tools to help manage your store, and visit the Gorgias App Store to activate them today!

Klaviyo (updated early 2022)

Klaviyo is an email and SMS marketing automation platform built for ecommerce. Gorgias was the first helpdesk to connect to Klaviyo SMS, allowing your brand to create seamless conversations between your marketing campaigns, shoppers, and support team.

With the updated Klaviyo integration, you can:

- Automatically create tickets in Gorgias from replies to Klaviyo SMS campaigns

- Reply to Klaviyo SMS messages in the same place as every other customer conversation, with all the context of your ecommerce integrations

- Create contact lists in Klaviyo based on support events in Gorgias

This integration helps you streamline customer interactions and create higher-converting marketing campaigns. To learn more, go to the Gorgias App Store.

Gorgias SMS

We recently released Gorgias SMS, an easy way for your brand to offer this convenient and conversational communication channel. It’s one of the fastest-growing support channels for ecommerce brands, and one of the most reliable for customers to contact you on (since it’s not dependent on internet access).

With Gorgias SMS, you can:

- Talk to customers on their phone (since they likely always have it on them)

- View order information in the same window as SMS tickets

- Easily send photos back and forth with customers

Click here to learn more about Gorgias SMS, available with all plans.

Thankful AI

Thankful AI is a platform dedicated to helping you deliver better support for the post-purchase needs of your customers. The AI is tailored specifically for retail and ecommerce businesses, so you don’t have to worry about a disjointed experience.

With this integration, the Thankful AI agent can:

- Triage and route tickets to real agents

- Tag tickets in Gorgias

- Respond to tickets across all written channels

This frees up your agents to focus on more meaningful conversations with customers. Visit the Gorgias App Store to learn more about the Thankful integration.

NetSuite

NetSuite is a cloud ERP including financials, CRM, and ecommerce. It helps brands work more efficiently, take control of inventory and fulfillment, and bring all their tools together in a unified business management suite.

Sync NetSuite data into Gorgias to give your agents important customer & order information in a single tab.

With this integration, you can:

- Add over 60 NetSuite fields to a widget in the Gorgias Customer Sidebar.

- Sync customer information from NetSuite into Gorgias.

- Sync order information & shipping details from NetSuite into Gorgias.

- Sync RMA information from NetSuite into Gorgias.

- View the last 10 orders that have been created or modified.

This helps your agents have all the context they need next to every conversation they have. Visit the Gorgias App Store to learn more.

Okendo

Okendo is a customer marketing platform and an Official Google Reviews partner that helps brands capture and showcase high-impact social proof such as product ratings & reviews, customer photos & videos, and Q&A messageboards.

With this integration, you can:

- Automatically create tickets in Gorgias for Okendo product reviews

- Easily respond to every customer who leaves a review

- Add an Okendo widget to the Gorgias Customer Sidebar for customer loyalty insights next to every ticket

Visit the Gorgias App Store to learn more about our Okendo integration.

Narvar

Link Narvar Return & Exchanges for Shopify with Gorgias to automate returns management and get rich insights that help you save costs and improve operations.

With this integration, you can:

- Create flexible return policies. Deploy tailored returns flows, policies, and fees for different products or customer segments to offer a differentiated experience.

- Retain revenue with recommended exchanges. Convert up to 45% of refunds to exchanges by recommending exchanges of same or different value to customers right within the returns flow.

- Bring customers back by incentivizing store credit. Add a credit bonus to store credit refunds based on specific exchange rules that will incentivize customers to keep shopping in your store.

To learn more about our Narvar integration, visit the Gorgias App Store.

Skio

Skio helps brands on Shopify sell subscriptions. With this integration, you can add a Skio widget to your Customer Sidebar in Gorgias. This gives your agents insights into customer subscriptions right in the helpdesk without having to switch tabs.

With this integration, you can:

- View Skio customer information in the Gorgias Customer Sidebar

- Quickly click out to Skio right from Gorgias if needed

- Respond to subscription questions from one central location

To learn more about our Skio integration, visit the Gorgias App Store.

Via Software

Via is a mobile commerce (SMS marketing) platform for ecommerce businesses. Send personalized messages to your customers for increased revenue and customer satisfaction.

With this integration, you can:

- Automatically create tickets in Gorgias based on customer replies to Via SMS

- Respond to Via SMS messages directly from the Gorgias helpdesk

- View the entire conversation, including replies from Gorgias, in the Via platform

Visit the Gorgias App Store to learn more.

Clyde

With Clyde and Gorgias working together, you can create a seamless and positive support experience by syncing all warranty data inside your Gorgias account. Stay focused and close tickets faster by viewing Clyde contracts and claims information in the same window you use to talk to customers.

With this integration, you can:

- See the full picture of every customer

- Find open warranty claims in a flash

- Turn your support center into a profit center

Manage warranty requests & find claims information in one tool. Head to the Gorgias App Store to learn more.

Smartrr

Smartrr is a seamless, full-service subscription solution. Paired with Gorgias, you can equip your team with the best customer service tools in one convenient location to increase customer satisfaction and drive customer loyalty.

With this integration, you can:

- Sync subscription data from Smartrr in Gorgias

- Help agents stay focused in a single tab

- Provide better, faster support for subscription questions

To learn more about our Smartrr integration, go to the Gorgias App Store.

ShipMonk

ShipMonk is an order fulfillment platform for eCommerce businesses ready to scale. They offer technology-driven fulfillment solutions that enable business founders to devote more time to the things that matter most in their businesses.

With this integration you'll be able to:

- Pull order fulfillment data and tracking information from ShipMonk to Gorgias

- Access specific orders in ShipMonk directly from the Customer Sidebar in Gorgias

Learn more about the ShipMonk integration in the Gorgias App Store.

Annex Cloud

Annex Cloud is a cloud-based customer loyalty platform for enterprises. They provide integrated loyalty, engagement, and retention solutions across a range of program types like paid memberships, incentives, and more.

With this integration, you can:

- Sync customers’ loyalty data across tools

- Show loyalty information of a customer when opening a new or existing ticket

Click here to learn more about our Annex Cloud integration.

Daton

Daton can replicate Gorgias data to your data warehouse in minutes, freeing up your analysts to focus on generating important business insights instead of extracting data.

With this integration, you can sync information from Gorgias to your data warehouse like:

- Customers

- Jobs

- Users

- Tickets

To learn more about Daton, visit the listing in the Gorgias App Store.

Shogun Frontend

Shogun is a headless ecommerce platform built for merchants. Convert more with richer merchandising and sub-second store speed. The Gorgias integration allows merchants to add chat capabilities to their Shogun-powered shops.

With Gorgias chat on your Shogun Frontend, you can:

- Promote your products and provide order details, all connected to your ecommerce platform

- Create chat campaigns to proactively message customers while they’re shopping live on your site

- Have agents answer live chats or create customizable, automated flows to free up agents for the most important conversations

Click here to learn more about our integration with Shogun Frontend.

Gobot

Gobot helps fast-growing Shopify stores convert more shoppers and reduce support burden with beautiful guided selling quizzes and AI-powered support chatbots.

With this integration, you can:

- Sync recommendation data from Gobot into Gorgias so reps can respond and address questions via chat or email.

- Collect post-purchase survey data and automatically connect select customers with questions/feedback to support reps in Gorgias.

- Automate repetitive customer support inquiries with Gobot’s AI Support Automation and seamlessly transition to Gorgias live chat or email support for those who require human assistance.

Visit the Gobot listing in the Gorgias App Store to learn more.

Shop2app

Shop2app is a mobile app builder. It’s designed for local delivery, national delivery, and in-store pickup, and also makes it easy to manage subscriptions and send push notifications to customers.

With this integration, you can:

- Automatically create tickets in Gorgias when customers contact a merchant from their mobile app.

- Allow customers to initiate a support ticket directly from past orders within the mobile app.

Visit the Shop2app listing in the Gorgias App Store to learn more.

Supercharge Gorgias, your CX command center

The Gorgias App Store features 85+ high-quality integrations with other leading ecommerce tools. By connecting the apps that power your store, you can give your agents the context they need to provide remarkable customer service from a single workspace. (No more switching tabs!)

To add any of these apps to your helpdesk, go to Settings > Integrations or visit the Gorgias App Store.

What’s New With Gorgias – May 2022 Product Updates

Each month, our product team holds a casual, conversational event with our customers to demo new features, receive real-time feedback, and answer live Q&As.

Watch the video recap here, or read on for a recap of the latest releases.

1. SMS is officially live for all accounts (3:10)

With this new channel, you can receive and respond to SMS and MMS messages within Gorgias. This makes it easy for your customers to communicate with your store while they’re on the go, and easy for your agents to provide fast, conversational support.

We’re releasing SMS this quarter as a free trial for every customer on every plan. Conversations will count toward your plan’s ticket count, but there are no additional charges for minutes, usage, phone numbers, etc. In the coming months, we’ll be assessing the best way to provide Voice and SMS so we can continue to innovate and build powerful new features for these channels.

2. SMS Pro-tip: Create a Gorgias Rule to set up a double opt-in (11:55)

If you want customers to consent to receive SMS messages before your agents actually reply, you can do this with a simple Rule in Gorgias. Here’s what it would look like:

Read this article for four more Gorgias Rules to help automate SMS.
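As a rough picture of what a double opt-in does, here is a minimal Python sketch of the decision logic. Everything in it is an assumption for illustration: the `YES` keyword, the action names, and the `handle_inbound_sms` function are hypothetical, and the real setup is a Rule configured in the Gorgias interface rather than code.

```python
OPT_IN_KEYWORD = "YES"  # assumed consent keyword, for illustration only


def handle_inbound_sms(phone: str, body: str, opted_in: set[str]) -> str:
    """Decide what to do with an inbound SMS under a double opt-in policy."""
    if phone in opted_in:
        # Consent already recorded: agents can reply as usual.
        return "route_to_agent"
    if body.strip().upper() == OPT_IN_KEYWORD:
        # The customer confirmed, so record consent for future messages.
        opted_in.add(phone)
        return "confirm_opt_in"
    # First contact without consent: ask the customer to confirm.
    return "send_opt_in_prompt"
```

The point of the design is that no agent reply goes out until the customer has explicitly confirmed they want to receive texts.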

3. Agents will now receive browser notifications when a ticket is assigned to them (14:55)

This is especially great for anyone who gets tickets assigned to them, but may not be looking at Gorgias throughout their entire workday. (Think managers, social media collaborators, etc.)

To see these notifications, you may need to adjust your browser and/or computer settings. You can see an example for Chrome + Mac in our official Product Update.

4. Quick response flows in self-service got a revamp (21:48)

Quick response flows bring in a critical component to self-service, creating more ways to engage with shoppers who visit your store online. We designed quick response flows with the guidance that 60% of the time, customers use chat to ask pre-purchase questions. Most successful merchants leverage their FAQ content to prompt conversation with quick response flows that result in generating revenue, trust and loyalty.

If you haven’t yet activated quick response flows, you’re in for a treat. With this revamp, you can now easily edit every step of the quick response flow experience from self-service settings. Immediately under the Quick Response Flows tab, you can write in any question and answer you prefer and hit save. There is no other place or screen you’d need to navigate. Using the preview on the right, you can check the quality of the experience you want to create for your customers.

If customers click on a quick response flow and find the information they need, this will not count towards your monthly ticket volume.

If they click on a quick response flow and select the “No, I need more help” option, it will create a ticket for an agent to address.
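Put another way, only the flows that end in a request for more help turn into tickets. The short Python sketch below illustrates that counting rule. The outcome labels `"resolved"` and `"needs_more_help"` and the function name are hypothetical and do not come from Gorgias.

```python
def tickets_created(flow_outcomes: list[str]) -> int:
    """Count quick response flow sessions that end up creating a ticket.

    Each outcome is either "resolved" (the customer found their answer)
    or "needs_more_help" (the customer asked for an agent).
    """
    return sum(1 for outcome in flow_outcomes if outcome == "needs_more_help")
```

So if ten shoppers use a flow and two of them ask for more help, only those two conversations would count toward your monthly ticket volume.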

Best practices

It’s amazing when our merchants start using a feature and take it to the next level. One best practice we’ve seen is creating a unique tag for each quick response flow (e.g. Quick_Response_Flow_1), then adding a corresponding view in Tickets. This way, you can closely track the conversations prompted by quick response flows and dedicate a select group of agents who are trained to expand on the subject and help your customers become fans. For more on this subject, check out the Quick Response Flows help doc here.

Customer Q&A (26:50)

Tune into that timestamp if you want the full 25 minutes of customer-led questions and answers from our product team. Here were a few of the highlights!

Gorgias Phone vs Aircall: What are the differences? What’s the timeline for improvement for Gorgias Voice? (32:40)

Gorgias Phone is an easy way to add a basic phone line to your store. If you’re looking for advanced, full call center features, our partners like Aircall or RingCentral may be a better solution for you.

For example, their phone-specific statistics are more in-depth than ours, but the ability to create a phone number and answer it in the Gorgias helpdesk is naturally easier with Gorgias.

Our long-term vision for Gorgias Phone is not to fully compete with apps like Aircall, but rather to invest in ecommerce-specific solutions so you can provide the best voice support to your shoppers.

What’s up with WhatsApp? (37:50)

It’s our next new channel, coming Q3! We have access to the API and are ready to start building at the end of the quarter. (Just need to polish up a few existing channel bugs first.)

Any plans to integrate with Shopify Blogs? (43:15)

Not yet, but we’d love to hear more feedback about this if it’s something you’re interested in! Submit this idea on our Product Roadmap to help us prioritize it.

Join us for the next monthly product event

That completes our recap of our May customer product event. We hold these events once a month as a way to review the latest releases and connect with our customers in real time. It’s a favorite of both our customers and the Gorgias team.

If you’d like to sign up for the next one to attend live, you can register here. We’d love to have you join us!

4 Benefits of Adding Voice Support to Your Ecommerce Store

Wondering if your team should add voice support to your ecommerce channels this year? You’re not alone.

Over 15% of our customers currently have a phone integration added to their account, thanks to the Gorgias Voice integration and partners like Aircall and RingCentral.

While voice support may feel like an “outdated” channel in the age of live chat and social media, this tells us that ecommerce support teams are increasingly finding value in offering it to their clients.

Here are 4 benefits of adding voice support to your ecommerce store:

- You can achieve faster first response times and faster resolution times.

- It’s easier to express empathy with customers.

- Having a phone number builds trust and brand quality.

- It makes your support more accessible.

You can achieve faster first response times and faster resolution times.

Phones are an immediate communication channel, so it’s not surprising that adding voice support can boost your first response time. What we weren’t expecting, however, was by how much:

Our customers with phones have a first response time that’s 7x faster than merchants that don’t offer voice support. (30 minutes compared to 4 hours.)

What’s even more important to note, however, is that adding voice support doesn’t slow down resolution time (as many support managers fear). In fact, it makes quite a positive impact:

Our merchants using phones have an average resolution time that’s 34% faster than customers who don’t.

So not only does this channel help you respond to customers faster, but it helps you resolve their issues faster. That means your team can work more efficiently and spend up to 34% less time resolving each ticket. (Imagine how that could help increase your store’s revenue!)

It’s easier to express empathy with customers (which can lead to better Satisfaction scores).

Talking (literally) to shoppers and hearing their tone of voice is the best way your agents can adjust their responses to create a great customer experience.

While you can do your best to read clues in email and chat, it’s always going to be easier to match the customer’s tone when actually listening to them on the phone.

And when your agents can express empathy and solve the problem accordingly, you’ve got a better chance at getting that 5-star review and positive customer feedback.

Our customers using phones have an average Satisfaction score of 4.56 out of 5.

While that score also depends a lot on your support agents and their personal approach to customer service, there’s no denying that actually speaking to clients is helpful for both parties in those moments.

Having a phone number builds trust and brand quality.

Especially if you sell high-end products or have VIP customers (like wholesalers buying in bulk), having a phone number adds a level of legitimacy to your business.

Since most online stores don’t immediately add phones as a support channel, it will stand out to customers when your shop does offer voice support.

Phones add a sense of maturity to your business, and (especially if you’re using an integrated solution like Gorgias Voice) there’s not much cost involved in elevating the status of your store like this.

It makes your support more accessible.

While the internet has come a long way over the years in terms of accessibility, the truth remains that phone support may be an easier and more comfortable contact method for some of your customers than digital channels.

Test your live chat experience with a screen reader, for example. What’s the experience like? (And how does it compare to dialing a phone number and talking verbally to someone?)

If there’s a chance that voice support is more approachable for a part of your customer demographic, you’ll create a better shopping experience for them by adding a phone line.

Now that you know the benefits of phone support, how do you actually add it?

The first thing you’ll need to decide is who on your team will actually be answering the phones.

A few options to explore:

- Having your existing support agents answer phones. This is best if your agents aren’t already too busy, or you have someone who’s particularly good at verbal communication.

- Hiring one or more new agents. This is the situation many support managers find themselves in: they want to add phones, but don’t feel like they have the right staff yet to manage it. Hiring someone new can help, but we also recommend following these tips to keep resolution times fast and phone processes efficient.

- Outsourcing phone support. If you’re expecting a large amount of call volume and don’t feel you can internally staff the team to support it, outsourcing to a call agency is always an option. This can be expensive upfront, however, so it may be best to try one of the other options and consider this as a last resort.

Next, you’ll need to choose a phone platform.

If you’re adding our built-in voice channel to your Gorgias helpdesk, all you have to do to get started is log into your Gorgias helpdesk and create a new number (or forward or port an existing one, if you happen to have one already).

Our phone integration is included in all Gorgias plans, and unlike other providers, there’s no annual contract fee and no minimum seat requirement.

This makes it a great option for teams looking to add phones for the first time or who want to manage all communication channels in one place.

Plus, our ecommerce integrations save your agents time by displaying callers’ shopping history right in the helpdesk, so they don’t have to go searching for the last order, for example.

For more tips on how to create efficient phone processes and speed up resolution time by 34%, check out this article.

Finally, once you’ve set up your team and chosen your provider, all that’s left to do is make your number visible.

If you’re offering voice support for all your customers, you might place it in the footer of your website or all transactional emails.

If you’re piloting voice support or using it exclusively for a segment of shoppers, you might save it for smaller email segments or place it only on dedicated landing pages just for them.

Wherever you decide to put your number, just make sure it's easily accessible and clearly visible so your shoppers can start calling, and your support team can start delivering even better customer experiences!

Start providing SMS support today, with Gorgias

SMS is a convenient way for customers to contact your brand and receive fast support. It’s no wonder it’s one of the top five channels consumers expect to use when engaging with brands, alongside email, voice, website, and in-person.

Every Gorgias plan now includes two-way SMS at no additional cost, making it easy for your brand to start offering this conversational channel.

Why offer SMS support?

There are many reasons to offer customer service messaging, but here are the top four:

It’s fast and conversational

SMS is a conversational, real-time channel. The benefit of this is that customers tend to keep the conversation short and reply quickly to follow-up questions, meaning your agents can resolve the situation quickly, too.

Customers can contact you while they’re “on the go”

Most people keep their phone with them everywhere they go. With SMS, it’s easy for customers to start the conversation and follow up as they move throughout their day, instead of feeling tied to a chat conversation on their laptop.

It’s natural for younger customers

Sending a text message feels like texting a friend, even when the conversation is between a customer and your brand. Younger customers will find this support channel natural to use, and it can even help you build that friendly feeling into your brand perception.

It makes sending photos back and forth easy

Does your refund or return policy require photo evidence to kick off the process? If your customers ever need to send pictures of damaged items or wrong products, SMS is the perfect channel because they’re probably taking those photos on their phone anyway.

Still not sure if SMS is a support channel your brand should prioritize? Try it for 2 weeks. Because SMS is included in every Gorgias plan, it’s easy to turn off if you decide it isn’t right.

Recommended reading: Our list of 60+ fascinating customer service statistics.

How to add SMS to your helpdesk

You’ll need two things to get started with Gorgias SMS. (Don’t worry, they’re both quick!)

The first is a Gorgias helpdesk account. If you’re new here, it only takes a few minutes to create one, and you can always book a call with our sales team if you have questions.

The second is a Gorgias-owned phone number, meaning you either created it in Gorgias or ported it from your previous phone provider. You can do both of these actions in Settings > Phone Numbers.

Note: SMS is currently only available for US, UK, and Canadian numbers.

Once your phone number is ready in Gorgias, you can add the SMS integration to it. You can do this from Settings > Integrations > SMS.

Once the integration is active, you’re ready to start replying to SMS conversations from your customers.

To tell your customers they can now text your brand, we recommend adding “Text us,” plus your phone number, in some or all of these places:

- The footer of your website

- The “Contact Us” page of your website

- Your Gorgias Help Center

- Transactional emails (order confirmation, return initiated, etc.)

4 automation Rules to help you get started

Below are four top automation rules to take full advantage of SMS customer service. We also have a full guide on customer service messaging that includes templates and macros to upgrade your SMS support.

Auto-tag with “SMS”

SMS is an official channel in Gorgias, meaning you can see SMS-specific stats or create SMS-specific Views out of the box. However, there may be times when you also want to tag tickets with “SMS,” in which case you can do so with a Rule like this:
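As a rough illustration, the logic of that Rule boils down to "when a ticket is created on the SMS channel, add the SMS tag." The Python sketch below models a ticket as a plain dictionary; the field names are assumptions for illustration, not the Gorgias data model.

```python
def auto_tag_sms(ticket: dict) -> dict:
    """Return a copy of a newly created ticket, tagged "SMS" if it arrived over SMS."""
    if ticket.get("channel") != "sms":
        return ticket  # other channels are left untouched
    tags = set(ticket.get("tags", []))
    tags.add("SMS")
    return {**ticket, "tags": sorted(tags)}
```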

Auto-assign to a real-time team

SMS is a fast, conversational channel, so you’ll want to assign these tickets to agents that can keep up with the pace. If you have a dedicated chat team, they’ll be naturals at answering questions via SMS, as well. Here’s a Rule that will automatically assign SMS tickets to a specific team.
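In plain terms, the Rule checks the channel and sets the assignee team. Here is a minimal Python sketch of that logic, assuming a dictionary-based ticket and a hypothetical team called "Chat & SMS". It also skips tickets that already have a team, which is a design choice of this sketch rather than documented Rule behavior.

```python
REAL_TIME_TEAM = "Chat & SMS"  # hypothetical team name


def auto_assign_sms(ticket: dict) -> dict:
    """Return a copy of the ticket, assigned to the real-time team if it is an unassigned SMS ticket."""
    if ticket.get("channel") == "sms" and ticket.get("assignee_team") is None:
        return {**ticket, "assignee_team": REAL_TIME_TEAM}
    return ticket
```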

Auto-reply: Message received

When customers text your brand, they’ll expect a fast response. To buy your agents some time, we recommend sending an auto-response to let the customer know their message has been received and an agent will be with them shortly. This will also give them confidence that the text message did in fact go through, so they don’t follow up right away.
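One way to picture this Rule: reply once, on the first message of a new SMS ticket, and stay quiet afterwards so customers aren’t sent the same text repeatedly. The sketch below assumes a dictionary-based ticket with a `message_count` field; both the field and the reply wording are hypothetical.

```python
from typing import Optional

RECEIVED_REPLY = "Thanks for texting us! We got your message and an agent will reply shortly."


def message_received_reply(ticket: dict) -> Optional[str]:
    """Return the acknowledgement for the first message of an SMS ticket, else None."""
    is_first_message = ticket.get("message_count", 0) == 1
    if ticket.get("channel") == "sms" and is_first_message:
        return RECEIVED_REPLY
    return None
```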

Auto-reply: Order status

Whenever you add a new communication channel for your customers, you should consider how you’ll respond to WISMO (“Where is my order?”) questions on it. With SMS, you’ll want to keep the length of your reply in mind so you’re not sending an insanely long text message back to customers. We recommend creating a Rule that can A) make sure the reply follows the best format for SMS and B) save your agents from having to answer these WISMO questions manually.
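To make both goals concrete, here is a hedged Python sketch of the logic: detect a WISMO-style message, then build a reply that fits in a single 160-character SMS segment (the limit for standard GSM-7 text). The keyword pattern, the `order` fields, and the reply template are assumptions for illustration; in Gorgias you would build this in the Rule editor with your own wording.

```python
import re
from typing import Optional

SMS_SEGMENT_LIMIT = 160  # characters in one standard (GSM-7) SMS segment

# Assumed keywords; real WISMO messages vary, so tune this to your own tickets.
WISMO_PATTERN = re.compile(
    r"where('s| is) my order|order status|track(ing)?", re.IGNORECASE
)


def order_status_reply(message: str, order: dict) -> Optional[str]:
    """Return a short order-status text for WISMO messages, else None."""
    if not WISMO_PATTERN.search(message):
        return None  # not an order-status question, leave it for an agent
    reply = (
        f"Order {order['number']} is {order['status']}. "
        f"Track it: {order['tracking_url']}"
    )
    if len(reply) > SMS_SEGMENT_LIMIT:
        # Fall back to the essentials rather than send a multi-part text.
        reply = f"Order {order['number']} is {order['status']}."
    return reply
```

Falling back to a shorter message when the tracking link is too long keeps the reply to one text, at the cost of leaving the link for a follow-up.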